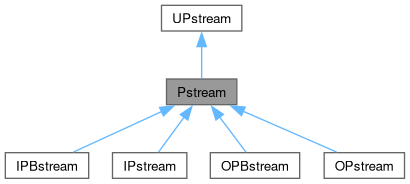

Inter-processor communications stream.

`#include <Pstream.H>`

| Public Member Functions | |

| ClassName ("Pstream") | |

| Declare name of the class and its debug switch. | |

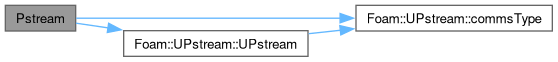

| Pstream (const UPstream::commsTypes commsType) noexcept | |

| Construct for communication type with empty buffer. | |

| Pstream (const UPstream::commsTypes commsType, int bufferSize) | |

| Construct for communication type with given buffer size. | |

| template<class T> | |

| FOAM_DEPRECATED_FOR (2025-03, "broadcast() or broadcastList()") static void scatterList(UList< T > &values | |

| The inverse of gatherList, but when combined with gatherList it effectively acts like a partial broadcast... | |

| Public Member Functions inherited from UPstream | |

| ClassName ("UPstream") | |

| Declare name of the class and its debug switch. | |

| UPstream (const commsTypes commsType) noexcept | |

| Construct for given communication type. | |

| commsTypes | commsType () const noexcept |

| Get the communications type of the stream. | |

| commsTypes | commsType (const commsTypes ct) noexcept |

| Set the communications type of the stream. | |

| Static Public Member Functions | |

| template<class Type> | |

| static void | broadcast (Type &value, const int communicator=UPstream::worldComm) |

| Broadcast content (contiguous or non-contiguous) to all communicator ranks. Does nothing in non-parallel. | |

| template<class Type, unsigned N> | |

| static void | broadcast (FixedList< Type, N > &list, const int communicator=UPstream::worldComm) |

| Broadcast fixed-list content (contiguous or non-contiguous) to all communicator ranks. Does nothing in non-parallel. | |

| template<class Type, class... Args> | |

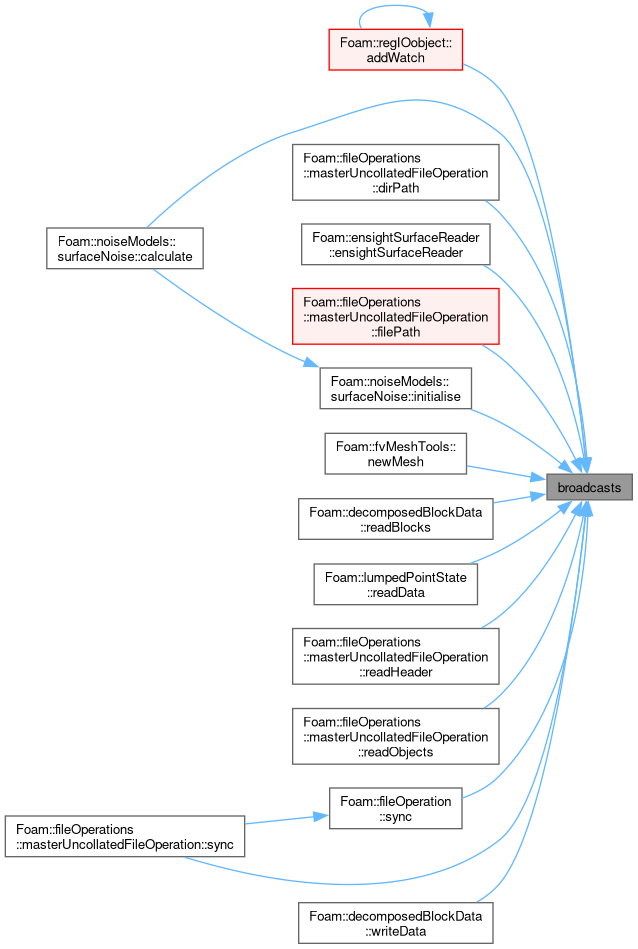

| static void | broadcasts (const int communicator, Type &value, Args &&... values) |

| Broadcast multiple items to all communicator ranks. Does nothing in non-parallel. | |

| template<class ListType> | |

| static void | broadcastList (ListType &list, const int communicator=UPstream::worldComm) |

| Broadcast list content (contiguous or non-contiguous) to all communicator ranks. Does nothing in non-parallel. | |

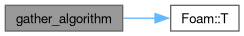

| template<class T, class BinaryOp, bool InplaceMode> | |

| static void | gather_algorithm (const UPstream::commsStructList &comms, T &value, BinaryOp bop, const int tag, const int communicator) |

| Implementation: gather (reduce) single element data onto UPstream::masterNo(). | |

| template<class T, class BinaryOp, bool InplaceMode> | |

| static bool | gather_topo_algorithm (T &value, BinaryOp bop, const int tag, const int communicator) |

| Implementation: gather (reduce) single element data onto UPstream::masterNo() using a topo algorithm. | |

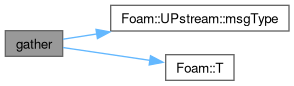

| template<class T, class BinaryOp, bool InplaceMode = false> | |

| static void | gather (T &value, BinaryOp bop, const int tag=UPstream::msgType(), const int communicator=UPstream::worldComm) |

| Gather (reduce) data, applying bop to combine value from different processors. The basis for Foam::reduce(). | |
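As a rough illustration of the gather (reduce) semantics described above, the following serial sketch folds per-rank values onto rank 0 with a binary operator. It does not call OpenFOAM or MPI: `simulateGather` and the vector-of-ranks model are hypothetical stand-ins, and the real routine follows a linear or tree communication schedule rather than a plain loop.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical serial model: rankValues[i] is the value held by rank i.
// Returns the value that rank 0 (the master) would hold after the gather.
template<class T, class BinaryOp>
T simulateGather(const std::vector<T>& rankValues, BinaryOp bop)
{
    assert(!rankValues.empty());  // at least the master rank must exist

    T result = rankValues[0];
    for (std::size_t rank = 1; rank < rankValues.size(); ++rank)
    {
        // Combine the value arriving from 'rank' into the master's value
        result = bop(result, rankValues[rank]);
    }
    return result;
}
```

Passing a sum or max lambda as `bop` mirrors the kind of operator a reduction would typically use; only the master's result is modelled here.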

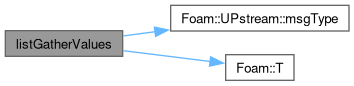

| template<class T> | |

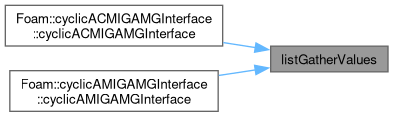

| static List< T > | listGatherValues (const T &localValue, const int communicator=UPstream::worldComm, const int tag=UPstream::msgType()) |

| Gather individual values into list locations. | |

| template<class T> | |

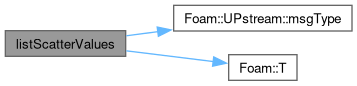

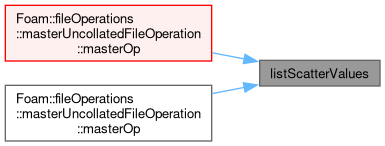

| static T | listScatterValues (const UList< T > &allValues, const int communicator=UPstream::worldComm, const int tag=UPstream::msgType()) |

| Scatter individual values from list locations. | |

| template<class T, class CombineOp> | |

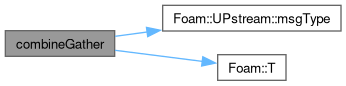

| static void | combineGather (T &value, CombineOp cop, const int tag=UPstream::msgType(), const int communicator=UPstream::worldComm) |

| Forwards to Pstream::gather with an in-place cop. | |

| template<class T, class CombineOp> | |

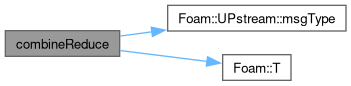

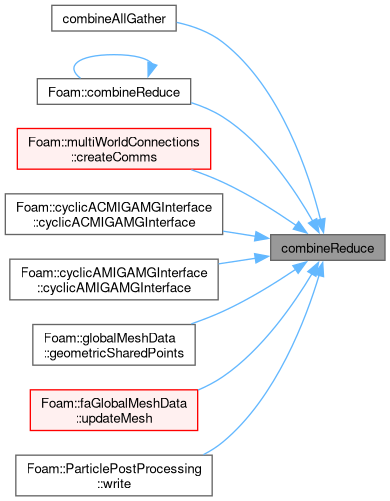

| static void | combineReduce (T &value, CombineOp cop, const int tag=UPstream::msgType(), const int communicator=UPstream::worldComm) |

| Reduce in-place (cf. MPI Allreduce), applying cop to combine the values from different processors in-place. | |

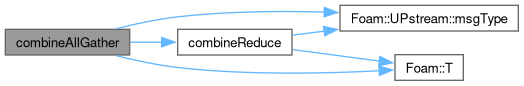

| template<class T, class CombineOp> | |

| static void | combineAllGather (T &value, CombineOp cop, const int tag=UPstream::msgType(), const int communicator=UPstream::worldComm) |

| Same as Pstream::combineReduce. | |

| template<class T, class BinaryOp, bool InplaceMode> | |

| static void | listGather_algorithm (const UPstream::commsStructList &comms, UList< T > &values, BinaryOp bop, const int tag, const int communicator) |

| Implementation: gather (reduce) list element data onto UPstream::masterNo(). | |

| template<class T, class BinaryOp, bool InplaceMode> | |

| static bool | listGather_topo_algorithm (UList< T > &values, BinaryOp bop, const int tag, const int communicator) |

| Implementation: gather (reduce) list element data onto UPstream::masterNo() using a topo algorithm. | |

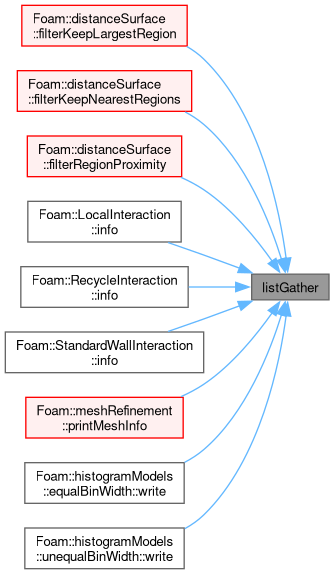

| template<class T, class BinaryOp, bool InplaceMode = false> | |

| static void | listGather (UList< T > &values, BinaryOp bop, const int tag=UPstream::msgType(), const int communicator=UPstream::worldComm) |

| Gather (reduce) list elements, applying bop to each list element. | |

| template<class T, class CombineOp> | |

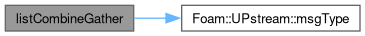

| static void | listCombineGather (UList< T > &values, CombineOp cop, const int tag=UPstream::msgType(), const int communicator=UPstream::worldComm) |

| Forwards to Pstream::listGather with an in-place cop. | |

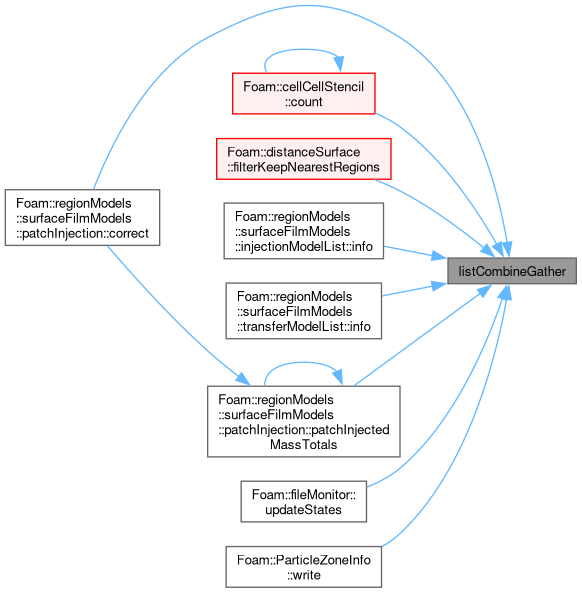

| template<class T, class BinaryOp, bool InplaceMode = false> | |

| static void | listReduce (UList< T > &values, BinaryOp bop, const int tag=UPstream::msgType(), const int communicator=UPstream::worldComm) |

| Reduce list elements (list must be equal size on all ranks), applying bop to each list element. | |
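The element-wise behaviour and the equal-size requirement can be sketched in plain C++ as follows. This is a serial model under stated assumptions, not the library implementation: `simulateListReduce` is a hypothetical name, and each inner vector stands in for the list held by one rank.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical serial model: rankLists[r] is the list held by rank r.
// After the call every rank holds the element-wise combination.
template<class T, class BinaryOp>
void simulateListReduce(std::vector<std::vector<T>>& rankLists, BinaryOp bop)
{
    if (rankLists.empty())
    {
        return;
    }

    std::vector<T> result = rankLists[0];
    for (std::size_t rank = 1; rank < rankLists.size(); ++rank)
    {
        // The list must have the same size on all ranks
        assert(rankLists[rank].size() == result.size());

        for (std::size_t i = 0; i < result.size(); ++i)
        {
            result[i] = bop(result[i], rankLists[rank][i]);
        }
    }

    // Reduce semantics: every rank ends up with the combined list
    for (auto& list : rankLists)
    {
        list = result;
    }
}
```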

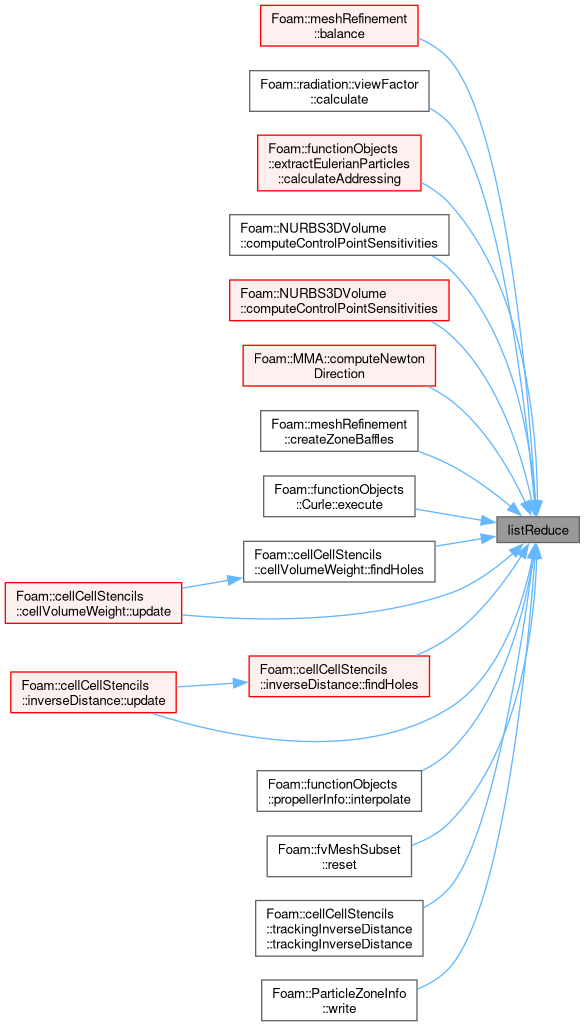

| template<class T, class CombineOp> | |

| static void | listCombineReduce (UList< T > &values, CombineOp cop, const int tag=UPstream::msgType(), const int communicator=UPstream::worldComm) |

| Forwards to Pstream::listReduce with an in-place cop. | |

| template<class T, class CombineOp> | |

| static void | listCombineAllGather (UList< T > &values, CombineOp cop, const int tag=UPstream::msgType(), const int communicator=UPstream::worldComm) |

| Same as Pstream::listCombineReduce. | |

| template<class Container, class BinaryOp, bool InplaceMode> | |

| static void | mapGather_algorithm (const UPstream::commsStructList &comms, Container &values, BinaryOp bop, const int tag, const int communicator) |

| Implementation: gather (reduce) Map/HashTable containers onto UPstream::masterNo(). | |

| template<class Container, class BinaryOp, bool InplaceMode> | |

| static bool | mapGather_topo_algorithm (Container &values, BinaryOp bop, const int tag, const int communicator) |

| Implementation: gather (reduce) Map/HashTable containers onto UPstream::masterNo() using a topo algorithm. | |

| template<class Container, class BinaryOp, bool InplaceMode = false> | |

| static void | mapGather (Container &values, BinaryOp bop, const int tag=UPstream::msgType(), const int communicator=UPstream::worldComm) |

| Gather (reduce) Map/HashTable containers, applying bop to combine entries from different processors. | |

| template<class Container, class CombineOp> | |

| static void | mapCombineGather (Container &values, CombineOp cop, const int tag=UPstream::msgType(), const int communicator=UPstream::worldComm) |

| Forwards to Pstream::mapGather with an in-place cop. | |

| template<class Container, class BinaryOp, bool InplaceMode = false> | |

| static void | mapReduce (Container &values, BinaryOp bop, const int tag=UPstream::msgType(), const int communicator=UPstream::worldComm) |

| Reduce in-place (cf. MPI Allreduce), applying bop to combine map values from different processors. After completion all processors have the same data. | |
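One plausible reading of the map reduction is sketched below in plain C++: entries with the same key are combined with `bop`, keys seen on only one rank are inserted unchanged, and every rank ends with the merged map. The name `simulateMapReduce`, the use of `std::map` in place of Map/HashTable, and the insert-if-missing behaviour are assumptions made for illustration.

```cpp
#include <map>
#include <vector>

// Hypothetical serial model: rankMaps[r] is the map held by rank r.
template<class Key, class T, class BinaryOp>
void simulateMapReduce(std::vector<std::map<Key, T>>& rankMaps, BinaryOp bop)
{
    std::map<Key, T> merged;

    for (const auto& local : rankMaps)
    {
        for (const auto& entry : local)
        {
            auto iter = merged.find(entry.first);
            if (iter == merged.end())
            {
                // Key not seen before: take the value as-is (assumed)
                merged.emplace(entry.first, entry.second);
            }
            else
            {
                // Key present on several ranks: combine the values
                iter->second = bop(iter->second, entry.second);
            }
        }
    }

    // After completion all ranks hold the same data
    for (auto& local : rankMaps)
    {
        local = merged;
    }
}
```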

| template<class Container, class CombineOp> | |

| static void | mapCombineReduce (Container &values, CombineOp cop, const int tag=UPstream::msgType(), const int communicator=UPstream::worldComm) |

| Forwards to Pstream::mapReduce with an in-place cop. | |

| template<class Container, class CombineOp> | |

| static void | mapCombineAllGather (Container &values, CombineOp cop, const int tag=UPstream::msgType(), const int communicator=UPstream::worldComm) |

| Same as Pstream::mapCombineReduce. | |

| template<class T> | |

| static void | gatherList_algorithm (const UPstream::commsStructList &comms, UList< T > &values, const int tag, const int communicator) |

| Implementation: gather data, keeping individual values separate. Output is only valid (consistent) on UPstream::masterNo(). | |

| template<class T> | |

| static bool | gatherList_topo_algorithm (UList< T > &values, const int tag, const int communicator) |

| Gather data, keeping individual values separate. | |

| template<class T> | |

| static void | scatterList_algorithm (const UPstream::commsStructList &comms, UList< T > &values, const int tag, const int communicator) |

| Implementation: inverse of gatherList_algorithm. | |

| template<class T> | |

| static void | gatherList (UList< T > &values, const int tag=UPstream::msgType(), const int communicator=UPstream::worldComm) |

| Gather data, but keep individual values separate. | |
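To make "keep individual values separate" concrete, here is a serial sketch in which each rank owns one slot of an nProcs-sized list and the master collects all slots. `simulateGatherList` is a hypothetical helper, not an OpenFOAM function, and it models only the result on the master, where the output is valid.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical serial model: rankLists[r] is the nProcs-sized list on rank r,
// of which only entry [r] has been filled in locally.
template<class T>
void simulateGatherList(std::vector<std::vector<T>>& rankLists)
{
    const std::size_t nProcs = rankLists.size();
    if (nProcs == 0)
    {
        return;
    }
    assert(rankLists[0].size() == nProcs);

    for (std::size_t rank = 1; rank < nProcs; ++rank)
    {
        assert(rankLists[rank].size() == nProcs);

        // The master receives the slot owned by 'rank'; nothing is combined
        rankLists[0][rank] = rankLists[rank][rank];
    }
}
```

The lists on the other ranks are left untouched in this model, which is why a broadcast or an all-gather variant is needed when every rank requires the full list.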

| template<class T> | |

| static void | allGatherList (UList< T > &values, const int tag=UPstream::msgType(), const int communicator=UPstream::worldComm) |

| Gather data, but keep individual values separate. Uses MPI_Allgather or manual communication. | |

| template<class Container> | |

| static void | exchangeSizes (const labelUList &sendProcs, const labelUList &recvProcs, const Container &sendBufs, labelList &sizes, const int tag=UPstream::msgType(), const int comm=UPstream::worldComm) |

| Helper: exchange sizes of sendBufs for specified send/recv ranks. | |

| template<class Container> | |

| static void | exchangeSizes (const labelUList &neighProcs, const Container &sendBufs, labelList &sizes, const int tag=UPstream::msgType(), const int comm=UPstream::worldComm) |

| Helper: exchange sizes of sendBufs for specified neighbour ranks. | |

| template<class Container> | |

| static void | exchangeSizes (const Container &sendBufs, labelList &recvSizes, const int comm=UPstream::worldComm) |

| Helper: exchange sizes of sendBufs. The sendBufs is the data per processor (in the communicator). | |
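Viewed across all ranks, a dense size exchange amounts to a transpose of the send-size matrix. The sketch below models that in serial C++; `simulateExchangeSizes` and the matrix layout are assumptions for illustration and do not reflect how the library communicates.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical serial model:
//   sendSizes[i][j] = number of items rank i sends to rank j
// Returns recvSizes with
//   recvSizes[j][i] = number of items rank j receives from rank i
std::vector<std::vector<int>> simulateExchangeSizes
(
    const std::vector<std::vector<int>>& sendSizes
)
{
    const std::size_t nProcs = sendSizes.size();
    std::vector<std::vector<int>> recvSizes(nProcs, std::vector<int>(nProcs, 0));

    for (std::size_t i = 0; i < nProcs; ++i)
    {
        // One send buffer per rank in the communicator
        assert(sendSizes[i].size() == nProcs);

        for (std::size_t j = 0; j < nProcs; ++j)
        {
            recvSizes[j][i] = sendSizes[i][j];
        }
    }
    return recvSizes;
}
```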

| template<class Container> | |

| static void | exchangeSizes (const Map< Container > &sendBufs, Map< label > &recvSizes, const int tag=UPstream::msgType(), const int comm=UPstream::worldComm) |

| Exchange the non-zero sizes of sendBufs entries (sparse map) with other ranks in the communicator using non-blocking consensus exchange. | |

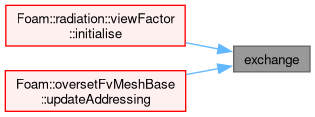

| template<class Container, class Type> | |

| static void | exchange (const UList< Container > &sendBufs, const labelUList &recvSizes, List< Container > &recvBufs, const int tag=UPstream::msgType(), const int comm=UPstream::worldComm, const bool wait=true) |

| Helper: exchange contiguous data. Sends sendBufs, receives into recvBufs using predetermined receive sizing. | |

| template<class Container, class Type> | |

| static void | exchange (const Map< Container > &sendBufs, const Map< label > &recvSizes, Map< Container > &recvBufs, const int tag=UPstream::msgType(), const int comm=UPstream::worldComm, const bool wait=true) |

| Exchange contiguous data. Sends sendBufs, receives into recvBufs. | |

| template<class Container, class Type> | |

| static void | exchange (const UList< Container > &sendBufs, List< Container > &recvBufs, const int tag=UPstream::msgType(), const int comm=UPstream::worldComm, const bool wait=true) |

| Exchange contiguous data. Sends sendBufs, receives into recvBufs. Determines sizes to receive. | |

| template<class Container, class Type> | |

| static void | exchange (const Map< Container > &sendBufs, Map< Container > &recvBufs, const int tag=UPstream::msgType(), const int comm=UPstream::worldComm, const bool wait=true) |

| Exchange contiguous data. Sends sendBufs, receives into recvBufs. Determines sizes to receive. | |

| template<class Container, class Type> | |

| static void | exchangeConsensus (const UList< Container > &sendBufs, List< Container > &recvBufs, const int tag, const int comm, const bool wait=true) |

| Exchange contiguous data using non-blocking consensus (NBX). Sends sendData, receives into recvData. | |

| template<class Container, class Type> | |

| static void | exchangeConsensus (const Map< Container > &sendBufs, Map< Container > &recvBufs, const int tag, const int comm, const bool wait=true) |

| Exchange contiguous data using non-blocking consensus (NBX). Sends sendData, receives into recvData. | |

| template<class Container, class Type> | |

| static Map< Container > | exchangeConsensus (const Map< Container > &sendBufs, const int tag, const int comm, const bool wait=true) |

| Exchange contiguous data using non-blocking consensus (NBX). Sends sendData and returns the receive information. | |

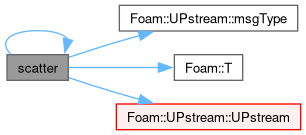

| template<class T> | |

| static void | scatter (T &value, const int tag=UPstream::msgType(), const int comm=UPstream::worldComm) |

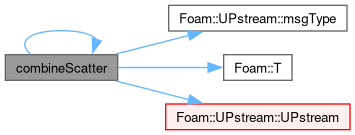

| template<class T> | |

| static void | combineScatter (T &value, const int tag=UPstream::msgType(), const int comm=UPstream::worldComm) |

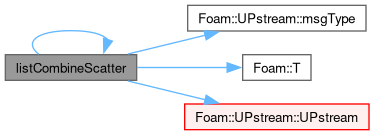

| template<class T> | |

| static void | listCombineScatter (List< T > &value, const int tag=UPstream::msgType(), const int comm=UPstream::worldComm) |

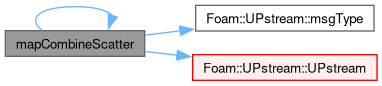

| template<class Container> | |

| static void | mapCombineScatter (Container &values, const int tag=UPstream::msgType(), const int comm=UPstream::worldComm) |

| template<class Type> | |

| static bool | broadcast (Type *buffer, std::streamsize count, const int communicator, const int root=UPstream::masterNo()) |

| Broadcast buffer content to all processes in communicator. | |

| template<class Type, unsigned N> | |

| static bool | broadcast (FixedList< Type, N > &list, const int communicator, const int root=UPstream::masterNo()) |

| Broadcast buffer content to all processes in communicator. | |

| Static Public Member Functions inherited from UPstream | |

| static bool | usingTopoControl (UPstream::topoControls ctrl) noexcept |

| Test for selection of given topology-aware routine. | |

| static constexpr int | commGlobal () noexcept |

| Communicator for all ranks, irrespective of any local worlds. | |

| static constexpr int | commSelf () noexcept |

| Communicator within the current rank only. | |

| static int | commConstWorld () noexcept |

| Communicator for all ranks (respecting any local worlds). | |

| static label | commWorld () noexcept |

| Communicator for all ranks (respecting any local worlds). | |

| static label | commWorld (const label communicator) noexcept |

| Set world communicator. Negative values are a no-op. | |

| static label | commWarn (const label communicator) noexcept |

| Alter communicator debugging setting. Warns for use of any communicator differing from specified. Negative values disable. | |

| static label | nComms () noexcept |

| Number of currently defined communicators. | |

| static void | printCommTree (int communicator, bool linear=false) |

| Debugging: print the communication tree. | |

| static int | commInterNode () noexcept |

| Communicator between nodes/hosts (respects any local worlds). | |

| static int | commLocalNode () noexcept |

| Communicator within the node/host (respects any local worlds). | |

| static bool | hasNodeCommunicators () noexcept |

| Both inter-node and local-node communicators have been created. | |

| static bool | usingNodeComms (const int communicator) |

| True if node topology-aware routines have been enabled, the run is parallel, the starting point is the world communicator, and it is not an odd corner case (i.e., all processes on one node, or all processes on different nodes). | |

| static label | newCommunicator (const label parent, const labelRange &subRanks, const bool withComponents=true) |

| Create new communicator with sub-ranks on the parent communicator. | |

| static label | newCommunicator (const label parent, const labelUList &subRanks, const bool withComponents=true) |

| Create new communicator with sub-ranks on the parent communicator. | |

| static label | dupCommunicator (const label parent) |

| Duplicate the parent communicator. | |

| static label | splitCommunicator (const label parent, const int colour, const bool two_step=true) |

| Allocate a new communicator by splitting the parent communicator on the given colour. | |

| static void | freeCommunicator (const label communicator, const bool withComponents=true) |

| Free a previously allocated communicator. | |

| static int | baseProcNo (label comm, int procID) |

| Return physical processor number (i.e. processor number in worldComm) given communicator and processor. | |

| static label | procNo (const label comm, const int baseProcID) |

| Return processor number in communicator (given physical processor number) (= reverse of baseProcNo). | |
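The relationship between the two lookups can be sketched with a single list of world ranks per communicator. This is a simplified one-level model: `toWorldRank`, `toCommRank` and the `procIDs` vector are hypothetical names, and the sketch ignores any chain of parent communicators that the real functions may need to follow.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Hypothetical model: procIDs[i] is the world rank of rank i in the communicator.

// Communicator rank -> world rank (cf. baseProcNo)
int toWorldRank(const std::vector<int>& procIDs, int commRank)
{
    return procIDs.at(static_cast<std::size_t>(commRank));
}

// World rank -> communicator rank (cf. procNo), or -1 if not a member
int toCommRank(const std::vector<int>& procIDs, int worldRank)
{
    const auto iter = std::find(procIDs.begin(), procIDs.end(), worldRank);

    return
    (
        iter == procIDs.end()
      ? -1
      : static_cast<int>(iter - procIDs.begin())
    );
}
```

Applying one lookup after the other returns the starting rank, which is the sense in which one is the reverse of the other.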

| static label | procNo (const label comm, const label currentComm, const int currentProcID) |

| Return processor number in communicator (given processor number and communicator). | |

| static void | addValidParOptions (HashTable< string > &validParOptions) |

| Add the valid options that this type of communications library adds/requires on the command line. | |

| static bool | init (int &argc, char **&argv, const bool needsThread) |

| Initialisation function called from main. | |

| static bool | initNull () |

| Special purpose initialisation function. | |

| static void | barrier (const int communicator, UPstream::Request *req=nullptr) |

| Impose a synchronisation barrier (optionally non-blocking). | |

| static void | send_done (const int toProcNo, const int communicator, const int tag=UPstream::msgType()+1970) |

| Impose a point-to-point synchronisation barrier by sending a zero-byte "done" message to given rank. | |

| static int | wait_done (const int fromProcNo, const int communicator, const int tag=UPstream::msgType()+1970) |

| Impose a point-to-point synchronisation barrier by receiving a zero-byte "done" message from given rank. | |

| static std::pair< int, int64_t > | probeMessage (const UPstream::commsTypes commsType, const int fromProcNo, const int tag=UPstream::msgType(), const int communicator=worldComm) |

| Probe for an incoming message. | |

| static void | printNodeCommsControl (Ostream &os) |

| Report the node-communication settings. | |

| static void | printTopoControl (Ostream &os) |

| Report the topology routines settings. | |

| static label | nRequests () noexcept |

| Number of outstanding requests (on the internal list of requests). | |

| static void | resetRequests (const label n) |

| Truncate outstanding requests to given length, which is expected to be in the range [0 to nRequests()]. | |

| static void | addRequest (UPstream::Request &req) |

| Transfer the (wrapped) MPI request to the internal global list and invalidate the parameter (ignores null requests). | |

| static void | cancelRequest (const label i) |

| Non-blocking comms: cancel and free outstanding request. Corresponds to MPI_Cancel() + MPI_Request_free(). | |

| static void | cancelRequest (UPstream::Request &req) |

| Non-blocking comms: cancel and free outstanding request. Corresponds to MPI_Cancel() + MPI_Request_free(). | |

| static void | cancelRequests (UList< UPstream::Request > &requests) |

| Non-blocking comms: cancel and free outstanding requests. Corresponds to MPI_Cancel() + MPI_Request_free(). | |

| static void | removeRequests (label pos, label len=-1) |

| Non-blocking comms: cancel/free outstanding requests (from position onwards) and remove from internal list of requests. Corresponds to MPI_Cancel() + MPI_Request_free(). | |

| static void | freeRequest (UPstream::Request &req) |

| Non-blocking comms: free outstanding request. Corresponds to MPI_Request_free(). | |

| static void | freeRequests (UList< UPstream::Request > &requests) |

| Non-blocking comms: free outstanding requests. Corresponds to MPI_Request_free(). | |

| static void | waitRequests (label pos, label len=-1) |

| Wait until all requests (from position onwards) have finished. Corresponds to MPI_Waitall(). | |

| static void | waitRequests (UList< UPstream::Request > &requests) |

| Wait until all requests have finished. Corresponds to MPI_Waitall(). | |

| static bool | waitAnyRequest (label pos, label len=-1) |

| Wait until any request (from position onwards) has finished. Corresponds to MPI_Waitany(). | |

| static bool | waitSomeRequests (label pos, label len=-1, DynamicList< int > *indices=nullptr) |

| Wait until some requests (from position onwards) have finished. Corresponds to MPI_Waitsome(). | |

| static bool | waitSomeRequests (UList< UPstream::Request > &requests, DynamicList< int > *indices=nullptr) |

| Wait until some requests have finished. Corresponds to MPI_Waitsome(). | |

| static int | waitAnyRequest (UList< UPstream::Request > &requests) |

| Wait until any request has finished and return its index. Corresponds to MPI_Waitany(). | |

| static void | waitRequest (const label i) |

| Wait until request i has finished. Corresponds to MPI_Wait(). | |

| static void | waitRequest (UPstream::Request &req) |

| Wait until specified request has finished. Corresponds to MPI_Wait(). | |

| static bool | activeRequest (const label i) |

| Is request i active (!= MPI_REQUEST_NULL)? | |

| static bool | activeRequest (const UPstream::Request &req) |

| Is request active (!= MPI_REQUEST_NULL)? | |

| static bool | finishedRequest (const label i) |

| Non-blocking comms: has request i finished? Corresponds to MPI_Test(). | |

| static bool | finishedRequest (UPstream::Request &req) |

| Non-blocking comms: has request finished? Corresponds to MPI_Test(). | |

| static bool | finishedRequests (label pos, label len=-1) |

| Non-blocking comms: have all requests (from position onwards) finished? Corresponds to MPI_Testall(). | |

| static bool | finishedRequests (UList< UPstream::Request > &requests) |

| Non-blocking comms: have all requests finished? Corresponds to MPI_Testall(). | |

| static bool | finishedRequestPair (label &req0, label &req1) |

| Non-blocking comms: have both requests finished? Corresponds to pair of MPI_Test(). | |

| static void | waitRequestPair (label &req0, label &req1) |

| Non-blocking comms: wait for both requests to finish. Corresponds to pair of MPI_Wait(). | |

| static bool | parRun (const bool on) noexcept |

| Set as parallel run on/off. | |

| static bool & | parRun () noexcept |

| Test if this is a parallel run. | |

| static bool | haveThreads () noexcept |

| Have support for threads. | |

| static constexpr int | masterNo () noexcept |

| Relative rank for the master process - is always 0. | |

| static label | nProcs (const label communicator=worldComm) |

| Number of ranks in parallel run (for given communicator). It is 1 for serial run. | |

| static int | myProcNo (const label communicator=worldComm) |

| Rank of this process in the communicator (starting from masterNo()). Negative if the process is not a rank in the communicator. | |

| static bool | master (const label communicator=worldComm) |

| True if process corresponds to the master rank in the communicator. | |

| static bool | is_rank (const label communicator=worldComm) |

| True if process corresponds to any rank (master or sub-rank) in the given communicator. | |

| static bool | is_subrank (const label communicator=worldComm) |

| True if process corresponds to a sub-rank in the given communicator. | |

| static bool | is_parallel (const label communicator=worldComm) |

| True if parallel algorithm or exchange is required. | |

| static int | numNodes () noexcept |

| The number of shared/host nodes in the (const) world communicator. | |

| static label | parent (int communicator) |

| The parent communicator. | |

| static List< int > & | procID (int communicator) |

| The list of ranks within a given communicator. | |

| static bool | sameProcs (int communicator1, int communicator2) |

| Test for communicator equality. | |

| template<typename T1, typename = std::void_t <std::enable_if_t<std::is_integral_v<T1>>>> | |

| static bool | sameProcs (int communicator, const UList< T1 > &procs) |

| Test equality of communicator procs with the given list of ranks. Includes a guard for the communicator index. | |

| template<typename T1, typename T2, typename = std::void_t < std::enable_if_t<std::is_integral_v<T1>>, std::enable_if_t<std::is_integral_v<T2>> >> | |

| static bool | sameProcs (const UList< T1 > &procs1, const UList< T2 > &procs2) |

| Test the equality of two lists of ranks. | |

| static const wordList & | allWorlds () noexcept |

| All worlds. | |

| static const labelList & | worldIDs () noexcept |

| The indices into allWorlds for all processes. | |

| static label | myWorldID () |

| My worldID. | |

| static const word & | myWorld () |

| My world. | |

| static rangeType | allProcs (const label communicator=worldComm) |

| Range of process indices for all processes. | |

| static rangeType | subProcs (const label communicator=worldComm) |

| Range of process indices for sub-processes. | |

| static const List< int > & | interNode_offsets () |

| Processor offsets corresponding to the inter-node communicator. | |

| static const rangeType & | localNode_parentProcs () |

| The range (start/size) of the commLocalNode ranks in terms of the (const) world communicator processors. | |

| static const commsStructList & | linearCommunication (int communicator) |

| Linear communication schedule (special case) for given communicator. | |

| static const commsStructList & | treeCommunication (int communicator) |

| Tree communication schedule (standard case) for given communicator. | |

| static const commsStructList & | whichCommunication (const int communicator, bool linear=false) |

| Communication schedule for all-to-master (proc 0) as linear/tree/none with switching based on UPstream::nProcsSimpleSum, the is_parallel() state and the optional linear parameter. | |

| static int & | msgType () noexcept |

| Message tag of standard messages. | |

| static int | msgType (int val) noexcept |

| Set the message tag for standard messages. | |

| static int | incrMsgType (int val=1) noexcept |

| Increment the message tag for standard messages. | |

| static void | shutdown (int errNo=0) |

| Shutdown (finalize) MPI as required. | |

| static void | abort (int errNo=1) |

| Call MPI_Abort with no other checks or cleanup. | |

| static void | exit (int errNo=1) |

| Shutdown (finalize) MPI as required and exit program with errNo. | |

| static void | allToAll (const UList< int32_t > &sendData, UList< int32_t > &recvData, const int communicator=UPstream::worldComm) |

| Exchange int32_t data with all ranks in communicator. | |

| static void | allToAllConsensus (const UList< int32_t > &sendData, UList< int32_t > &recvData, const int tag, const int communicator=UPstream::worldComm) |

| Exchange non-zero int32_t data between ranks [NBX]. | |

| static void | allToAllConsensus (const Map< int32_t > &sendData, Map< int32_t > &recvData, const int tag, const int communicator=UPstream::worldComm) |

| Exchange int32_t data between ranks [NBX]. | |

| static Map< int32_t > | allToAllConsensus (const Map< int32_t > &sendData, const int tag, const int communicator=UPstream::worldComm) |

| Exchange int32_t data between ranks [NBX]. | |

| static void | allToAll (const UList< int64_t > &sendData, UList< int64_t > &recvData, const int communicator=UPstream::worldComm) |

| Exchange int64_t data with all ranks in communicator. | |

| static void | allToAllConsensus (const UList< int64_t > &sendData, UList< int64_t > &recvData, const int tag, const int communicator=UPstream::worldComm) |

| Exchange non-zero int64_t data between ranks [NBX]. | |

| static void | allToAllConsensus (const Map< int64_t > &sendData, Map< int64_t > &recvData, const int tag, const int communicator=UPstream::worldComm) |

| Exchange int64_t data between ranks [NBX]. | |

| static Map< int64_t > | allToAllConsensus (const Map< int64_t > &sendData, const int tag, const int communicator=UPstream::worldComm) |

| Exchange int64_t data between ranks [NBX]. | |

| template<class Type> | |

| static void | mpiGather (const Type *sendData, Type *recvData, int count, const int communicator=UPstream::worldComm) |

| Receive identically-sized (contiguous) data from all ranks. | |

| template<class Type> | |

| static void | mpiScatter (const Type *sendData, Type *recvData, int count, const int communicator=UPstream::worldComm) |

| Send identically-sized (contiguous) data to all ranks. | |

| template<class Type> | |

| static void | mpiAllGather (Type *allData, int count, const int communicator=UPstream::worldComm) |

| Gather/scatter identically-sized data. | |

| template<class Type> | |

| static void | mpiGatherv (const Type *sendData, int sendCount, Type *recvData, const UList< int > &recvCounts, const UList< int > &recvOffsets, const int communicator=UPstream::worldComm) |

| Receive variable length data from all ranks. | |
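For variable-length gathers, the receive offsets are conventionally the exclusive prefix sum of the receive counts, so each rank's block lands directly after the previous one. The sketch below shows that bookkeeping in serial C++; `offsetsFromCounts` and `simulateGatherv` are hypothetical helpers and make no MPI calls.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper: offsets as the exclusive prefix sum of the counts
std::vector<int> offsetsFromCounts(const std::vector<int>& counts)
{
    std::vector<int> offsets(counts.size(), 0);
    for (std::size_t i = 1; i < counts.size(); ++i)
    {
        offsets[i] = offsets[i-1] + counts[i-1];
    }
    return offsets;
}

// Hypothetical serial model: rankData[r] is the send buffer of rank r.
// Returns the receive buffer as assembled on the receiving rank.
template<class T>
std::vector<T> simulateGatherv
(
    const std::vector<std::vector<T>>& rankData,
    const std::vector<int>& recvCounts,
    const std::vector<int>& recvOffsets
)
{
    assert(rankData.size() == recvCounts.size());
    assert(rankData.size() == recvOffsets.size());

    // The receive buffer must reach the end of the furthest block
    std::size_t total = 0;
    for (std::size_t rank = 0; rank < rankData.size(); ++rank)
    {
        const std::size_t end =
            static_cast<std::size_t>(recvOffsets[rank] + recvCounts[rank]);
        total = std::max(total, end);
    }

    std::vector<T> recvData(total);
    for (std::size_t rank = 0; rank < rankData.size(); ++rank)
    {
        assert(rankData[rank].size() == static_cast<std::size_t>(recvCounts[rank]));

        // Place the block from 'rank' at its offset in the receive buffer
        std::copy
        (
            rankData[rank].begin(),
            rankData[rank].end(),
            recvData.begin() + recvOffsets[rank]
        );
    }
    return recvData;
}
```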

| template<class Type> | |

| static void | mpiScatterv (const Type *sendData, const UList< int > &sendCounts, const UList< int > &sendOffsets, Type *recvData, int recvCount, const int communicator=UPstream::worldComm) |

| Send variable length data to all ranks. | |

| template<class T> | |

| static List< T > | allGatherValues (const T &localValue, const int communicator=UPstream::worldComm) |

| Allgather individual values into list locations. | |

| template<class T> | |

| static List< T > | listGatherValues (const T &localValue, const int communicator=UPstream::worldComm) |

| Gather individual values into list locations. | |

| template<class T> | |

| static T | listScatterValues (const UList< T > &allValues, const int communicator=UPstream::worldComm) |

| Scatter individual values from list locations. | |

| template<class Type> | |

| static bool | broadcast (Type *buffer, std::streamsize count, const int communicator, const int root=UPstream::masterNo()) |

| Broadcast buffer contents (contiguous types) to all ranks (default: from rank=0). The sizes must match on all processes. | |

| template<class Type, unsigned N> | |

| static bool | broadcast (FixedList< Type, N > &list, const int communicator, const int root=UPstream::masterNo()) |

| Broadcast fixed-list content (contiguous types) to all ranks (default: from rank=0). The sizes must match on all processes. | |

| template<class T> | |

| static void | mpiReduce (T values[], int count, const UPstream::opCodes opCodeId, const int communicator) |

| MPI_Reduce (blocking) for known operators. | |

| template<UPstream::opCodes opCode, class T> | |

| static void | mpiReduce (T values[], int count, const int communicator) |

| MPI_Reduce (blocking) for known operators. | |

| template<class T> | |

| static void | mpiAllReduce (T values[], int count, const UPstream::opCodes opCodeId, const int communicator) |

| MPI_Allreduce (blocking) for known operators. | |

| template<UPstream::opCodes opCode, class T> | |

| static void | mpiAllReduce (T values[], int count, const int communicator) |

| MPI_Allreduce (blocking) for known operators. | |

| template<class T> | |

| static void | mpiAllReduce (T values[], int count, const UPstream::opCodes opCodeId, const int communicator, UPstream::Request &req) |

| MPI_Iallreduce (non-blocking) for known operators. | |

| template<UPstream::opCodes opCode, class T> | |

| static void | mpiAllReduce (T values[], int count, const int communicator, UPstream::Request &req) |

| MPI_Iallreduce (non-blocking) for known operators. | |

| template<Foam::UPstream::opCodes opCode, class T> | |

| static void | mpiScan (T values[], int count, const int communicator, const bool exclusive=false) |

| Inclusive/exclusive scan (in-place). | |

| template<Foam::UPstream::opCodes opCode, class T> | |

| static T | mpiScan (const T &localValue, const int communicator, const bool exclusive=false) |

| Inclusive/exclusive scan returning the result. In exclusive mode, the degenerate value on rank=0 has no meaning but is treated like non-exclusive mode (i.e., original values). | |

| template<class T> | |

| static void | mpiScan_min (T values[], int count, const int communicator, const bool exclusive=false) |

| Inclusive/exclusive min scan (in-place). | |

| template<class T> | |

| static void | mpiExscan_min (T values[], int count, const int communicator) |

| Exclusive min scan (in-place). | |

| template<class T> | |

| static T | mpiScan_min (const T &value, const int communicator, const bool exclusive=false) |

| Inclusive/exclusive min scan returning result. | |

| template<class T> | |

| static T | mpiExscan_min (const T &value, const int communicator) |

| Exclusive min scan returning result. | |

| template<class T> | |

| static void | mpiScan_max (T values[], int count, const int communicator, const bool exclusive=false) |

| Inclusive/exclusive max scan (in-place). | |

| template<class T> | |

| static void | mpiExscan_max (T values[], int count, const int communicator) |

| Exclusive max scan (in-place). | |

| template<class T> | |

| static T | mpiScan_max (const T &value, const int communicator, const bool exclusive=false) |

| Inclusive/exclusive max scan returning result. | |

| template<class T> | |

| static T | mpiExscan_max (const T &value, const int communicator) |

| Exclusive max scan returning result. | |

| template<class T> | |

| static void | mpiScan_sum (T values[], int count, const int communicator, const bool exclusive=false) |

| Inclusive/exclusive sum scan (in-place). | |

| template<class T> | |

| static void | mpiExscan_sum (T values[], int count, const int communicator) |

| Exclusive sum scan (in-place). | |

| template<class T> | |

| static T | mpiScan_sum (const T &value, const int communicator, const bool exclusive=false) |

| Inclusive/exclusive sum scan returning result. | |

| template<class T> | |

| static T | mpiExscan_sum (const T &value, const int communicator) |

| Exclusive sum scan returning result. | |

| static void | reduceAnd (bool &value, const int communicator=worldComm) |

| Logical (and) reduction (MPI_Allreduce). | |

| static void | reduceOr (bool &value, const int communicator=worldComm) |

| Logical (or) reduction (MPI_Allreduce). | |

| static int | find_first (bool condition, int communicator) |

| Locate the first rank for which the condition is true, or -1 if no ranks satisfy the condition. | |

| static int | find_last (bool condition, int communicator) |

| Locate the last rank for which the condition is true, or -1 if no ranks satisfy the condition. | |

| static label | allocateCommunicator (const label parent, const labelRange &subRanks, const bool withComponents=true) |

| static label | allocateCommunicator (const label parent, const labelUList &subRanks, const bool withComponents=true) |

| static label | commInterHost () noexcept |

| Communicator between nodes (respects any local worlds). | |

| static label | commIntraHost () noexcept |

| Communicator within the node (respects any local worlds). | |

| static void | waitRequests () |

| Wait for all requests to finish. | |

| template<class Type> | |

| static void | gather (const Type *send, int count, Type *recv, const UList< int > &counts, const UList< int > &offsets, const int comm=UPstream::worldComm) |

| template<class Type> | |

| static void | scatter (const Type *send, const UList< int > &counts, const UList< int > &offsets, Type *recv, int count, const int comm=UPstream::worldComm) |
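The inclusive and exclusive scan variants listed above differ only in whether a rank's own value is part of its result. The following is a minimal sketch that emulates a sum scan on a single process, with one vector entry standing in for each rank. `emulateSumScan` is a hypothetical helper, not an OpenFOAM function, and the handling of rank 0 in exclusive mode follows the description of the value-returning `mpiScan` (the original value is kept).

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// perRank[i] stands in for the local value held by rank i.
// Returns what each rank would hold after a sum scan.
// Hypothetical helper for illustration; not an OpenFOAM function.
std::vector<int> emulateSumScan(const std::vector<int>& perRank, bool exclusive)
{
    std::vector<int> result(perRank.size());
    int running = 0;  // sum of the values on all lower ranks

    for (std::size_t rank = 0; rank < perRank.size(); ++rank)
    {
        if (exclusive && rank > 0)
        {
            // Exclusive: only the contributions of lower ranks
            result[rank] = running;
        }
        else
        {
            // Inclusive mode, or the degenerate rank 0 of an exclusive scan
            result[rank] = running + perRank[rank];
        }
        running += perRank[rank];
    }
    return result;
}
```

With local values 1, 2, 3, 4 the inclusive result is 1, 3, 6, 10, while the exclusive result is 1, 1, 3, 6. An exclusive sum scan of local sizes is the usual way to obtain each rank's starting offset in a global numbering.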

Public Attributes | |

| const int | tag = UPstream::msgType() |

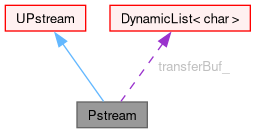

Protected Attributes | |

| DynamicList< char > | transferBuf_ |

| Allocated transfer buffer (can be used for send or receive). | |

Additional Inherited Members | |

| Public Types inherited from UPstream | |

| enum class | commsTypes : char { buffered , scheduled , nonBlocking , blocking = buffered } |

| Communications types. More... | |

| enum class | sendModes : char { normal , sync } |

| Different MPI-send modes (ignored for commsTypes::buffered). More... | |

| enum class | dataTypes : char { Basic_begin , type_byte = Basic_begin , type_int16 , type_int32 , type_int64 , type_uint16 , type_uint32 , type_uint64 , type_float , type_double , type_long_double , Basic_end , User_begin = Basic_end , type_3float = User_begin , type_3double , type_6float , type_6double , type_9float , type_9double , invalid , User_end = invalid , DataTypes_end = invalid } |

| Mapping of some fundamental and aggregate types to MPI data types. More... | |

| enum class | opCodes : char { Basic_begin , op_min = Basic_begin , op_max , op_sum , op_prod , op_bool_and , op_bool_or , op_bool_xor , op_bit_and , op_bit_or , op_bit_xor , Basic_end , Extra_begin = Basic_end , op_replace = Extra_begin , op_no_op , invalid , Extra_end = invalid , OpCodes_end = invalid } |

| Mapping of some MPI op codes. More... | |

| enum class | topoControls : int { broadcast = 1 , reduce = 2 , gather = 4 , combine = 16 , mapGather = 32 , gatherList = 64 } |

| Some bit masks corresponding to topology controls. More... | |

| typedef IntRange< int > | rangeType |

| Int ranges are used for MPI ranks (processes). | |
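The `topoControls` values listed above are distinct powers of two, so several of them can be combined into one integer mask. The sketch below re-declares the enumeration locally with the values shown in the listing and tests individual bits. `toMask` and `isSelected` are hypothetical helper names, and how OpenFOAM itself evaluates the mask is not shown here.

```cpp
#include <cassert>

// Values copied from the UPstream::topoControls listing above.
enum class topoControls : int
{
    broadcast = 1,
    reduce = 2,
    gather = 4,
    combine = 16,
    mapGather = 32,
    gatherList = 64
};

// Hypothetical helper: convert one control to its bit value
constexpr int toMask(topoControls ctrl)
{
    return static_cast<int>(ctrl);
}

// Hypothetical helper: test whether a control bit is set in a mask
constexpr bool isSelected(int mask, topoControls ctrl)
{
    return (mask & toMask(ctrl)) != 0;
}
```

For example, `toMask(topoControls::broadcast) | toMask(topoControls::gather)` gives 5, which selects broadcast and gather but not reduce.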

| Static Public Attributes inherited from UPstream | |

| static const Enum< commsTypes > | commsTypeNames |

| Enumerated names for the communication types. | |

| static int | nodeCommsControl_ |

| Use of host/node topology-aware routines. | |

| static int | nodeCommsMin_ |

| Minimum number of nodes before topology-aware routines are enabled. | |

| static int | topologyControl_ |

| Selection of topology-aware routines as a bitmask combination of the topoControls enumerations. | |

| static bool | floatTransfer |

| Whether to use compact transfer, in which floats replace doubles, reducing the bandwidth requirement at the expense of some loss in accuracy. | |

| static int | nProcsSimpleSum |

| Number of processors at which to change from linear to tree communication. | |

| static int | nProcsNonblockingExchange |

| Number of processors to change to nonBlocking consensual exchange (NBX). Ignored for zero or negative values. | |

| static int | nPollProcInterfaces |

| Number of polling cycles in processor updates. | |

| static commsTypes | defaultCommsType |

| Default commsType. | |

| static int | maxCommsSize |

| Optional maximum message size (bytes). | |

| static int | tuning_NBX_ |

| Tuning parameters for non-blocking exchange (NBX). | |

| static const int | mpiBufferSize |

| MPI buffer-size (bytes). | |

| static label | worldComm |

| Communicator for all ranks. May differ from commGlobal() if local worlds are in use. | |

| static label | warnComm |

| Debugging: warn for use of any communicator differing from warnComm. | |

| Static Protected Member Functions inherited from UPstream | |

| static bool | mpi_broadcast (void *buf, std::streamsize count, const UPstream::dataTypes dataTypeId, const int communicator, const int root=0) |

| Broadcast buffer contents to all ranks (default: from rank=0). The sizes must match on all processes. | |

| static void | mpi_reduce (void *values, int count, const UPstream::dataTypes dataTypeId, const UPstream::opCodes opCodeId, const int communicator, UPstream::Request *req=nullptr) |

| In-place reduction of values with result on rank 0. | |

| static void | mpi_allreduce (void *values, int count, const UPstream::dataTypes dataTypeId, const UPstream::opCodes opCodeId, const int communicator, UPstream::Request *req=nullptr) |

| In-place reduction of values with same result on all ranks. | |

| static void | mpi_scan_reduce (void *values, int count, const UPstream::dataTypes dataTypeId, const UPstream::opCodes opCodeId, const int communicator, const bool exclusive) |

| In-place scan/exscan reduction of values. | |

| static bool | mpi_send (const UPstream::commsTypes commsType, const void *buf, std::streamsize count, const UPstream::dataTypes dataTypeId, const int toProcNo, const int tag, const int communicator, UPstream::Request *req=nullptr, const UPstream::sendModes sendMode=UPstream::sendModes::normal) |

| Send buffer contents of specified data type to given processor. | |

| static std::streamsize | mpi_receive (const UPstream::commsTypes commsType, void *buf, std::streamsize count, const UPstream::dataTypes dataTypeId, const int fromProcNo, const int tag, const int communicator, UPstream::Request *req=nullptr) |

| Receive buffer contents of specified data type from given processor. | |

| static void | mpi_gather (const void *sendData, void *recvData, int count, const UPstream::dataTypes dataTypeId, const int communicator, UPstream::Request *req=nullptr) |

| Receive identically-sized (contiguous) data from all ranks, placing the result on rank 0. | |

| static void | mpi_scatter (const void *sendData, void *recvData, int count, const UPstream::dataTypes dataTypeId, const int communicator, UPstream::Request *req=nullptr) |

| Send identically-sized (contiguous) data from rank 0 to all other ranks. | |

| static void | mpi_allgather (void *allData, int count, const UPstream::dataTypes dataTypeId, const int communicator, UPstream::Request *req=nullptr) |

| Gather/scatter identically-sized data. | |

| static void | mpi_gatherv (const void *sendData, int sendCount, void *recvData, const UList< int > &recvCounts, const UList< int > &recvOffsets, const UPstream::dataTypes dataTypeId, const int communicator) |

| Receive variable length data from all ranks, placing the result on rank 0. (caution: known to scale poorly). | |

| static void | mpi_scatterv (const void *sendData, const UList< int > &sendCounts, const UList< int > &sendOffsets, void *recvData, int recvCount, const UPstream::dataTypes dataTypeId, const int communicator) |

| Send variable length data from rank 0 to all ranks. (caution: known to scale poorly). | |
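The variable-length gather and scatter routines above take per-rank counts and offsets. The sketch below emulates, on a single process, how such counts and offsets place each rank's contribution in the receive buffer on rank 0. It assumes the contributions are packed back to back, so each offset is the running sum of the preceding counts. `GathervLayout`, `makeLayout` and `emulateGatherv` are hypothetical names, not OpenFOAM functions.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical container for the per-rank counts and offsets
struct GathervLayout
{
    std::vector<int> counts;   // number of elements sent by each rank
    std::vector<int> offsets;  // start of each rank's data in the receive buffer
};

// Assumption: contributions are packed contiguously in rank order
GathervLayout makeLayout(const std::vector<std::vector<double>>& perRank)
{
    GathervLayout layout;
    int offset = 0;
    for (const auto& local : perRank)
    {
        layout.counts.push_back(static_cast<int>(local.size()));
        layout.offsets.push_back(offset);
        offset += static_cast<int>(local.size());
    }
    return layout;
}

// Copy each rank's data to its offset, as the receive buffer on rank 0 would look
std::vector<double> emulateGatherv
(
    const std::vector<std::vector<double>>& perRank,
    const GathervLayout& layout
)
{
    assert(layout.counts.size() == perRank.size());
    assert(layout.offsets.size() == perRank.size());

    const int total =
        perRank.empty() ? 0 : layout.offsets.back() + layout.counts.back();
    std::vector<double> recv(static_cast<std::size_t>(total));

    for (std::size_t rank = 0; rank < perRank.size(); ++rank)
    {
        for (int i = 0; i < layout.counts[rank]; ++i)
        {
            recv[static_cast<std::size_t>(layout.offsets[rank] + i)] =
                perRank[rank][static_cast<std::size_t>(i)];
        }
    }
    return recv;
}
```

A rank with nothing to send simply has a count of zero and shares its offset with the next rank.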

Inter-processor communications stream.

|

inline explicit noexcept |

Construct for communication type with empty buffer.

Definition at line 83 of file Pstream.H.

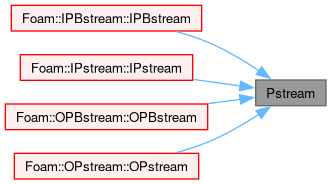

References UPstream::commsType(), and Foam::noexcept.

Referenced by IPBstream::IPBstream(), IPstream::IPstream(), OPBstream::OPBstream(), and OPstream::OPstream().

|

inline |

Construct for communication type with given buffer size.

Definition at line 91 of file Pstream.H.

References UPstream::commsType(), transferBuf_, and UPstream::UPstream().

| ClassName | ( | "Pstream" | ) |

Declare name of the class and its debug switch.

|

static |

Broadcast content (contiguous or non-contiguous) to all communicator ranks. Does nothing in non-parallel.

References UPstream::worldComm.

Referenced by SprayCloud< CloudType >::penetration().

|

static |

Broadcast fixed-list content (contiguous or non-contiguous) to all communicator ranks. Does nothing in non-parallel.

References UPstream::worldComm.

|

static |

Broadcast multiple items to all communicator ranks. Does nothing in non-parallel.

Referenced by regIOobject::addWatch(), surfaceNoise::calculate(), masterUncollatedFileOperation::dirPath(), ensightSurfaceReader::ensightSurfaceReader(), masterUncollatedFileOperation::filePath(), surfaceNoise::initialise(), fvMeshTools::newMesh(), decomposedBlockData::readBlocks(), lumpedPointState::readData(), masterUncollatedFileOperation::readHeader(), masterUncollatedFileOperation::readObjects(), fileOperation::sync(), masterUncollatedFileOperation::sync(), and decomposedBlockData::writeData().

|

static |

Broadcast list content (contiguous or non-contiguous) to all communicator ranks. Does nothing in non-parallel.

For contiguous list data, this avoids serialization overhead, but at the expense of an additional broadcast call.

References UPstream::worldComm.

|

static |

Implementation: gather (reduce) single element data onto UPstream::masterNo().

| comms | Communication order | |

| [in,out] | value |

References Foam::T(), and tag.

|

static |

Implementation: gather (reduce) single element data onto UPstream::masterNo() using a topo algorithm.

| [in,out] | value |

References Foam::T(), and tag.

|

static |

Gather (reduce) data, applying bop to combine value from different processors. The basis for Foam::reduce().

A no-op for non-parallel.

| InplaceMode | indicates that the binary operator modifies values in-place, not using assignment |

| [in,out] | value | the result is only reliable on rank=0 |

References UPstream::msgType(), Foam::T(), tag, and UPstream::worldComm.
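The difference between the two operator styles described above (assignment versus in-place) can be sketched on a single process. In the sketch below, one vector entry stands in for the value held by each rank, and the fold is done in simple rank order, which is an assumption made for brevity. `gatherAssign` and `gatherInplace` are hypothetical names, not OpenFOAM functions.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <vector>

// Assignment mode: the operator returns the combined value
template<class T, class BinaryOp>
T gatherAssign(const std::vector<T>& perRank, BinaryOp bop)
{
    assert(!perRank.empty());
    T value = perRank[0];  // the master starts with its own value
    for (std::size_t rank = 1; rank < perRank.size(); ++rank)
    {
        value = bop(value, perRank[rank]);
    }
    return value;
}

// In-place mode: the operator modifies its first argument directly
template<class T, class CombineOp>
T gatherInplace(const std::vector<T>& perRank, CombineOp cop)
{
    assert(!perRank.empty());
    T value = perRank[0];
    for (std::size_t rank = 1; rank < perRank.size(); ++rank)
    {
        cop(value, perRank[rank]);
    }
    return value;
}
```

Both forms yield the same result on the master for an equivalent operator. The in-place form avoids a temporary and an assignment, which matters for large or non-trivially copyable types.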

|

static |

Gather individual values into list locations.

On master list length == nProcs, otherwise zero length.

For non-parallel : the returned list length is 1 with localValue.

| tag | Only used for non-contiguous types |

References UPstream::msgType(), Foam::T(), tag, and UPstream::worldComm.

Referenced by cyclicACMIGAMGInterface::cyclicACMIGAMGInterface(), and cyclicAMIGAMGInterface::cyclicAMIGAMGInterface().

|

static |

Scatter individual values from list locations.

On master input list length == nProcs, ignored on other procs.

For non-parallel : returns the first list element (or default initialized).

| tag | Only used for non-contiguous types |

References UPstream::msgType(), Foam::T(), tag, and UPstream::worldComm.

Referenced by masterUncollatedFileOperation::masterOp(), and masterUncollatedFileOperation::masterOp().
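The list-length rules stated for the gather and scatter of individual values can be summarised with a single-process emulation. The sketch assumes rank 0 is the master and uses one vector entry per rank. `emulateListGatherValues` and `emulateListScatterValues` are hypothetical names that only mirror the documented contract.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// localValues[i] stands in for the localValue passed on rank i.
// Returns the list that rank myRank would receive from the gather.
std::vector<int> emulateListGatherValues
(
    const std::vector<int>& localValues,
    std::size_t myRank
)
{
    assert(myRank < localValues.size());
    // Master: one entry per rank. Other ranks: zero length.
    return (myRank == 0) ? localValues : std::vector<int>();
}

// allValuesOnMaster is the input list on the master (length == nProcs).
// Returns the single value that rank myRank would receive from the scatter.
int emulateListScatterValues
(
    const std::vector<int>& allValuesOnMaster,
    std::size_t myRank
)
{
    assert(myRank < allValuesOnMaster.size());
    return allValuesOnMaster[myRank];
}
```

With a single rank, the gather returns a list of length 1 holding the local value and the scatter returns the first list element, matching the non-parallel behaviour described above.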

|

static |

Forwards to Pstream::gather with an in-place cop.

| [in,out] | value | the result is only reliable on rank=0 |

References UPstream::msgType(), Foam::T(), tag, and UPstream::worldComm.

|

static |

Reduce inplace (cf. MPI Allreduce) applying cop to inplace combine value from different processors.

| [in,out] | value | the result is consistent on all ranks |

References UPstream::msgType(), Foam::T(), tag, and UPstream::worldComm.

Referenced by combineAllGather(), Foam::combineReduce(), multiWorldConnections::createComms(), cyclicACMIGAMGInterface::cyclicACMIGAMGInterface(), cyclicAMIGAMGInterface::cyclicAMIGAMGInterface(), globalMeshData::geometricSharedPoints(), faGlobalMeshData::updateMesh(), and ParticlePostProcessing< CloudType >::write().

|

inline static |

Same as Pstream::combineReduce.

| [in,out] | value | the result is consistent on all ranks |

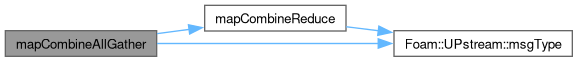

Definition at line 281 of file Pstream.H.

References combineReduce(), UPstream::msgType(), Foam::T(), tag, and UPstream::worldComm.

|

static |

Implementation: gather (reduce) list element data onto UPstream::masterNo().

| comms | Communication order | |

| [in,out] | values |

References tag.

|

static |

Implementation: gather (reduce) list element data onto UPstream::masterNo() using a topo algorithm.

| [in,out] | values |

References tag.

|

static |

Gather (reduce) list elements, applying bop to each list element.

| InplaceMode | indicates that the binary operator modifies values in-place, not using assignment |

| [in,out] | values | the result is only reliable on rank=0 |

References UPstream::msgType(), tag, and UPstream::worldComm.

Referenced by distanceSurface::filterKeepLargestRegion(), distanceSurface::filterKeepNearestRegions(), distanceSurface::filterRegionProximity(), LocalInteraction< CloudType >::info(), RecycleInteraction< CloudType >::info(), StandardWallInteraction< CloudType >::info(), meshRefinement::printMeshInfo(), equalBinWidth::write(), and unequalBinWidth::write().

|

static |

Forwards to Pstream::listGather with an in-place cop.

| [in,out] | values | the result is only reliable on rank=0 |

References UPstream::msgType(), tag, and UPstream::worldComm.

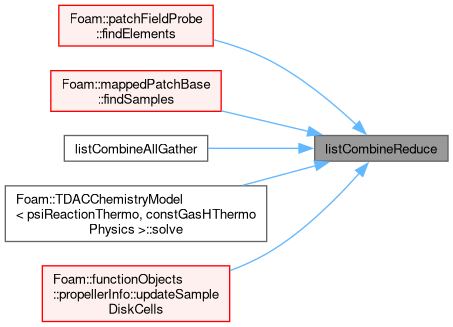

Referenced by patchInjection::correct(), cellCellStencil::count(), distanceSurface::filterKeepNearestRegions(), injectionModelList::info(), transferModelList::info(), patchInjection::patchInjectedMassTotals(), fileMonitor::updateStates(), and ParticleZoneInfo< CloudType >::write().

|

static |

Reduce list elements (list must be equal size on all ranks), applying bop to each list element.

| InplaceMode | indicates that the binary operator modifies values in-place, not using assignment |

| [in,out] | values | the result is consistent on all ranks |

References UPstream::msgType(), tag, and UPstream::worldComm.

Referenced by meshRefinement::balance(), viewFactor::calculate(), extractEulerianParticles::calculateAddressing(), NURBS3DVolume::computeControlPointSensitivities(), NURBS3DVolume::computeControlPointSensitivities(), MMA::computeNewtonDirection(), meshRefinement::createZoneBaffles(), Curle::execute(), cellVolumeWeight::findHoles(), inverseDistance::findHoles(), propellerInfo::interpolate(), fvMeshSubset::reset(), trackingInverseDistance::trackingInverseDistance(), cellVolumeWeight::update(), inverseDistance::update(), and ParticleZoneInfo< CloudType >::write().
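An element-wise list reduction of this kind can be emulated on a single process as follows. Each inner vector stands in for the list held by one rank, and the equal-size requirement is checked with an assertion. `emulateListReduce` is a hypothetical name, and the rank-order fold is an assumption made for brevity.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <vector>

// perRank[r] is the list held by rank r; all lists must have equal size.
// Returns the list every rank would hold after the reduction.
template<class T, class BinaryOp>
std::vector<T> emulateListReduce
(
    const std::vector<std::vector<T>>& perRank,
    BinaryOp bop
)
{
    assert(!perRank.empty());
    std::vector<T> result = perRank[0];

    for (std::size_t rank = 1; rank < perRank.size(); ++rank)
    {
        assert(perRank[rank].size() == result.size());
        for (std::size_t i = 0; i < result.size(); ++i)
        {
            // Combine element i across ranks, independently of other elements
            result[i] = bop(result[i], perRank[rank][i]);
        }
    }
    return result;
}
```

For the lists {1, 5}, {4, 2} and {3, 3}, a max operator gives {4, 5} and a sum operator gives {8, 10}.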

|

static |

Forwards to Pstream::listReduce with an in-place cop.

| [in,out] | values | the result is consistent on all ranks |

References UPstream::msgType(), tag, and UPstream::worldComm.

Referenced by patchFieldProbe::findElements(), mappedPatchBase::findSamples(), listCombineAllGather(), TDACChemistryModel< psiReactionThermo, constGasHThermoPhysics >::solve(), and propellerInfo::updateSampleDiskCells().

|

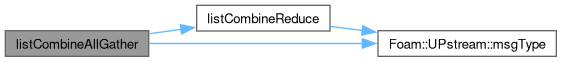

inline static |

Same as Pstream::listCombineReduce.

| [in,out] | values | the result is consistent on all ranks |

Definition at line 393 of file Pstream.H.

References listCombineReduce(), UPstream::msgType(), tag, and UPstream::worldComm.

|

static |

Implementation: gather (reduce) Map/HashTable containers onto UPstream::masterNo().

| comms | Communication order |

References tag.

|

static |

Implementation: gather (reduce) Map/HashTable containers onto UPstream::masterNo() using a topo algorithm.

References tag.

|

static |

Gather (reduce) Map/HashTable containers, applying bop to combine entries from different processors.

| InplaceMode | indicates that the binary operator modifies values in-place, not using assignment |

| [in,out] | values | the result is only reliable on rank=0 |

References UPstream::msgType(), tag, and UPstream::worldComm.

|

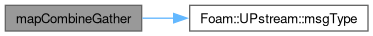

static |

Forwards to Pstream::mapGather with an in-place cop.

| [in,out] | values | the result is only reliable on rank=0 |

References UPstream::msgType(), tag, and UPstream::worldComm.

|

static |

Reduce inplace (cf. MPI Allreduce) applying bop to combine map values from different processors. After completion all processors have the same data.

Wraps mapCombineGather/broadcast (may change in the future).

| [in,out] | values | the result is consistent on all ranks |

References UPstream::msgType(), tag, and UPstream::worldComm.
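A map reduction of this kind can be pictured as a key-wise merge. The sketch below emulates it on a single process with `std::map` standing in for Map/HashTable. It assumes that a key missing from the accumulated result is inserted as-is, and that a key already present has its value combined with the operator. `emulateMapReduce` is a hypothetical name, not an OpenFOAM function.

```cpp
#include <cassert>
#include <functional>
#include <map>
#include <string>
#include <vector>

// perRank[r] is the map held by rank r.
// Returns the merged map that every rank would hold afterwards.
template<class BinaryOp>
std::map<std::string, int> emulateMapReduce
(
    const std::vector<std::map<std::string, int>>& perRank,
    BinaryOp bop
)
{
    std::map<std::string, int> result;

    for (const auto& local : perRank)
    {
        for (const auto& entry : local)
        {
            auto iter = result.find(entry.first);
            if (iter == result.end())
            {
                // Assumption: unseen keys are inserted unchanged
                result.emplace(entry.first, entry.second);
            }
            else
            {
                // Existing keys are combined with the binary operator
                iter->second = bop(iter->second, entry.second);
            }
        }
    }
    return result;
}
```

Unlike the list reduction, the maps do not need the same keys on every rank: the result holds the union of all keys.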

|

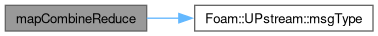

static |

Forwards to Pstream::mapReduce with an in-place cop.

| [in,out] | values | the result is consistent on all ranks |

References UPstream::msgType(), tag, and UPstream::worldComm.

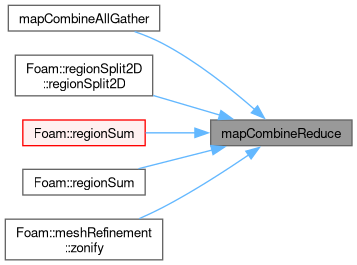

Referenced by mapCombineAllGather(), regionSplit2D::regionSplit2D(), Foam::regionSum(), Foam::regionSum(), and meshRefinement::zonify().

|

inline static |

Same as Pstream::mapCombineReduce.

| [in,out] | values | the result is consistent on all ranks |

Definition at line 504 of file Pstream.H.

References mapCombineReduce(), UPstream::msgType(), tag, and UPstream::worldComm.

|

static |

Implementation: gather data, keeping individual values separate. Output is only valid (consistent) on UPstream::masterNo().

| comms | Communication order | |

| [in,out] | values |

References tag.

|

static |

Gather data, keeping individual values separate.

| [in,out] | values |

References tag.

|

static |

Implementation: inverse of gatherList_algorithm.

| comms | Communication order | |

| [in,out] | values |

References tag.

|

static |

Gather data, but keep individual values separate.

| [in,out] | values |

References UPstream::msgType(), tag, and UPstream::worldComm.

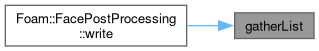

Referenced by FacePostProcessing< CloudType >::write().

|

static |

Gather data, but keep individual values separate. Uses MPI_Allgather or manual communication.

After completion all processors have the same data. Wraps gatherList/scatterList (may change in the future).

| [in,out] | values |

References UPstream::msgType(), tag, and UPstream::worldComm.

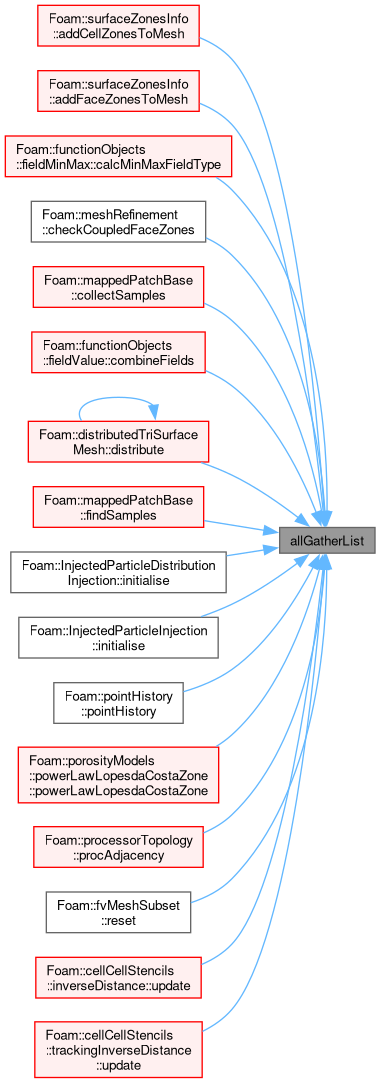

Referenced by surfaceZonesInfo::addCellZonesToMesh(), surfaceZonesInfo::addFaceZonesToMesh(), fieldMinMax::calcMinMaxFieldType(), meshRefinement::checkCoupledFaceZones(), mappedPatchBase::collectSamples(), fieldValue::combineFields(), distributedTriSurfaceMesh::distribute(), mappedPatchBase::findSamples(), InjectedParticleDistributionInjection< CloudType >::initialise(), InjectedParticleInjection< CloudType >::initialise(), pointHistory::pointHistory(), powerLawLopesdaCostaZone::powerLawLopesdaCostaZone(), processorTopology::procAdjacency(), fvMeshSubset::reset(), inverseDistance::update(), and trackingInverseDistance::update().
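The gather-then-distribute behaviour described above can be emulated on a single process. The sketch assumes the usual convention that each rank passes a list with one slot per rank and has filled in only its own slot beforehand. `emulateAllGatherList` is a hypothetical name, not an OpenFOAM function.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// perRank[r] is the list held by rank r (one slot per rank).
// Assumption: on entry, rank r has only set perRank[r][r].
// Returns the lists held by all ranks after the operation.
std::vector<std::vector<int>> emulateAllGatherList
(
    std::vector<std::vector<int>> perRank
)
{
    const std::size_t nProcs = perRank.size();

    // Gather phase: the master collects slot r from rank r
    for (std::size_t rank = 1; rank < nProcs; ++rank)
    {
        assert(perRank[rank].size() == nProcs);
        perRank[0][rank] = perRank[rank][rank];
    }

    // Distribute phase: every rank receives the completed master list
    for (std::size_t rank = 1; rank < nProcs; ++rank)
    {
        perRank[rank] = perRank[0];
    }
    return perRank;
}
```

After the call every rank holds the same complete list, with the value contributed by rank r in slot r.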

|

static |

Helper: exchange sizes of sendBufs for specified send/recv ranks.

References UPstream::msgType(), tag, and UPstream::worldComm.

Referenced by mapDistributeBase::mapDistributeBase().

|

static |

Helper: exchange sizes of sendBufs for specified neighbour ranks.

References UPstream::msgType(), tag, and UPstream::worldComm.

|

static |

Helper: exchange sizes of sendBufs. The sendBufs list holds the data per processor (in the communicator).

Returns sizes of sendBufs on the sending processor.

For non-parallel : copy sizes from sendBufs directly.

References UPstream::worldComm.
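The size exchange amounts to transposing a table of buffer sizes: what rank p plans to send to rank r becomes what rank r expects to receive from rank p. A minimal single-process emulation, with hypothetical names (`Buffers`, `emulateExchangeSizes`) and plain vectors in place of OpenFOAM containers:

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// One send buffer per destination rank
using Buffers = std::vector<std::vector<int>>;

// sendBufsPerRank[p][r] is the data that rank p sends to rank r.
// Returns the receive sizes seen on rank myRank:
// recvSizes[p] is the size of the buffer that rank p sends to myRank.
std::vector<int> emulateExchangeSizes
(
    const std::vector<Buffers>& sendBufsPerRank,
    std::size_t myRank
)
{
    const std::size_t nProcs = sendBufsPerRank.size();
    assert(myRank < nProcs);

    std::vector<int> recvSizes(nProcs, 0);
    for (std::size_t proc = 0; proc < nProcs; ++proc)
    {
        assert(sendBufsPerRank[proc].size() == nProcs);
        recvSizes[proc] = static_cast<int>(sendBufsPerRank[proc][myRank].size());
    }
    return recvSizes;
}
```

With a single rank, the only entry is the size of the rank's own send buffer, which corresponds to the non-parallel case of copying sizes directly from sendBufs.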

|

static |

Exchange the non-zero sizes of sendBufs entries (sparse map) with other ranks in the communicator using non-blocking consensus exchange.

Since the recvData map is always cleared before receipt and sizes of zero are never transmitted, a simple check of its keys is sufficient to determine connectivity.

For non-parallel : copy size of rank (if it exists and non-empty) from sendBufs to recvSizes.

References UPstream::msgType(), tag, and UPstream::worldComm.
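The connectivity property of the sparse size exchange can be illustrated with a single-process emulation. Only non-empty buffers produce an entry on the receiving side, so the keys of the resulting map are exactly the ranks that send something. `SparseBufs` and `emulateSparseSizes` are hypothetical names, and the consensus protocol itself is not modelled here.

```cpp
#include <cassert>
#include <cstddef>
#include <map>
#include <vector>

// Destination rank -> data to send (absent key means nothing to send)
using SparseBufs = std::map<int, std::vector<int>>;

// sendBufsPerRank[p] is the sparse send map on rank p.
// Returns the map (sending rank -> size) seen on rank myRank.
std::map<int, int> emulateSparseSizes
(
    const std::vector<SparseBufs>& sendBufsPerRank,
    int myRank
)
{
    std::map<int, int> recvSizes;  // starts empty, like the cleared receive map

    for (std::size_t proc = 0; proc < sendBufsPerRank.size(); ++proc)
    {
        const auto iter = sendBufsPerRank[proc].find(myRank);

        // Sizes of zero are never transmitted
        if (iter != sendBufsPerRank[proc].end() && !iter->second.empty())
        {
            recvSizes[static_cast<int>(proc)] = static_cast<int>(iter->second.size());
        }
    }
    return recvSizes;
}
```

A rank can then loop over the keys of the returned map to find its sending neighbours without inspecting the sizes.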

|

static |

Helper: exchange contiguous data. Sends sendBufs, receives into recvBufs using predetermined receive sizing.

If wait=true will wait for all transfers to finish.

| wait | Wait for requests to complete |

References UPstream::msgType(), tag, and UPstream::worldComm.

Referenced by viewFactor::initialise(), and oversetFvMeshBase::updateAddressing().

|

static |

Exchange contiguous data. Sends sendBufs, receives into recvBufs.

Data provided and received as container.

No internal guards or resizing.

| recvSizes | Num of recv elements (not bytes) |

| wait | Wait for requests to complete |

References UPstream::msgType(), tag, and UPstream::worldComm.

|

static |

Exchange contiguous data. Sends sendBufs, receives into recvBufs. Determines sizes to receive.

If wait=true will wait for all transfers to finish.

| wait | Wait for requests to complete |

References UPstream::msgType(), tag, and UPstream::worldComm.

|

static |

Exchange contiguous data. Sends sendBufs, receives into recvBufs. Determines sizes to receive.

If wait=true will wait for all transfers to finish.

| wait | Wait for requests to complete |

References UPstream::msgType(), tag, and UPstream::worldComm.

|

static |

Exchange contiguous data using non-blocking consensus (NBX). Sends sendData, receives into recvData.

Each entry of the recvBufs list is cleared before receipt. For non-parallel : copy own rank from sendBufs to recvBufs.

| wait | (ignored) |

References tag.

|

static |

Exchange contiguous data using non-blocking consensus (NBX). Sends sendData, receives into recvData.

Each entry of the recvBufs map is cleared before receipt, but the map itself is not cleared. This allows the map to preserve allocated space (e.g., DynamicList entries) between calls.

For non-parallel : copy own rank (if it exists and non-empty) from sendBufs to recvBufs.

| wait | (ignored) |

References tag.

|

static |

Exchange contiguous data using non-blocking consensus (NBX). Sends sendData, returns receive information.

For non-parallel : copy own rank (if it exists and non-empty).

| wait | (ignored) |

References tag.

|

inline static |

| tag | ignored |

Definition at line 807 of file Pstream.H.

References UPstream::broadcast, UPstream::msgType(), scatter(), Foam::T(), tag, UPstream::UPstream(), and UPstream::worldComm.

Referenced by scatter().

|

inline static |

| tag | ignored |

Definition at line 822 of file Pstream.H.

References UPstream::broadcast, combineScatter(), UPstream::msgType(), Foam::T(), tag, UPstream::UPstream(), and UPstream::worldComm.

Referenced by combineScatter().

|

inline static |

| tag | ignored |

Definition at line 837 of file Pstream.H.

References UPstream::broadcast, listCombineScatter(), UPstream::msgType(), Foam::T(), tag, UPstream::UPstream(), and UPstream::worldComm.

Referenced by listCombineScatter().

|

inline static |

| tag | ignored |

Definition at line 852 of file Pstream.H.

References UPstream::broadcast, mapCombineScatter(), UPstream::msgType(), tag, UPstream::UPstream(), and UPstream::worldComm.

Referenced by mapCombineScatter().

| FOAM_DEPRECATED_FOR | ( | 2025-03, "broadcast() or broadcastList()" | ) |

The inverse of gatherList, but when combined with gatherList it effectively acts like a partial broadcast...

[in,out]

References Foam::T().

|

inline static |

Broadcast buffer content to all processes in communicator.

| root | The broadcast root |

|

inline static |

Broadcast buffer content to all processes in communicator.

| root | The broadcast root |

|

protected |

Allocated transfer buffer (can be used for send or receive).

Definition at line 67 of file Pstream.H.

Referenced by IPBstream::IPBstream(), IPstream::IPstream(), OPBstream::OPBstream(), OPstream::OPstream(), and Pstream().

| const int tag = UPstream::msgType() |

Definition at line 874 of file Pstream.H.

Referenced by allGatherList(), combineAllGather(), combineGather(), combineReduce(), combineScatter(), exchange(), exchange(), exchange(), exchange(), exchangeConsensus(), exchangeConsensus(), exchangeConsensus(), exchangeSizes(), exchangeSizes(), exchangeSizes(), gather(), gather_algorithm(), gather_topo_algorithm(), gatherList(), gatherList_algorithm(), gatherList_topo_algorithm(), IPstream::IPstream(), listCombineAllGather(), listCombineGather(), listCombineReduce(), listCombineScatter(), listGather(), listGather_algorithm(), listGather_topo_algorithm(), listGatherValues(), listReduce(), listScatterValues(), mapCombineAllGather(), mapCombineGather(), mapCombineReduce(), mapCombineScatter(), mapGather(), mapGather_algorithm(), mapGather_topo_algorithm(), mapReduce(), OPstream::OPstream(), IPstream::recv(), scatter(), scatterList_algorithm(), OPstream::send(), and OPstream::send().