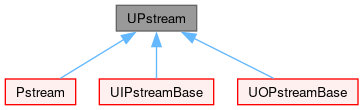

Inter-processor communications stream.

#include <UPstream.H>

Classes | |

| class | commsStruct |

| Structure for communicating between processors. | |

| class | commsStructList |

| Collection of communication structures. | |

| class | communicator |

| Wrapper for internally indexed communicator label. Always invokes UPstream::allocateCommunicatorComponents() and UPstream::freeCommunicatorComponents(). | |

| class | Communicator |

| An opaque wrapper for MPI_Comm with a vendor-independent representation without any <mpi.h> header. | |

| class | Request |

| An opaque wrapper for MPI_Request with a vendor-independent representation without any <mpi.h> header. | |

| class | File |

| An opaque wrapper for MPI_File methods without any <mpi.h> header dependency. | |

| class | Window |

| An opaque wrapper for MPI_Win with a vendor-independent representation and without any <mpi.h> header dependency. | |

Public Types | |

| enum class | commsTypes : char { buffered , scheduled , nonBlocking , blocking = buffered } |

| Communications types. | |

| enum class | sendModes : char { normal , sync } |

| Different MPI-send modes (ignored for commsTypes::buffered). | |

| enum class | dataTypes : char { Basic_begin , type_byte = Basic_begin , type_int16 , type_int32 , type_int64 , type_uint16 , type_uint32 , type_uint64 , type_float , type_double , type_long_double , Basic_end , User_begin = Basic_end , type_3float = User_begin , type_3double , type_6float , type_6double , type_9float , type_9double , invalid , User_end = invalid , DataTypes_end = invalid } |

| Mapping of some fundamental and aggregate types to MPI data types. | |

| enum class | opCodes : char { Basic_begin , op_min = Basic_begin , op_max , op_sum , op_prod , op_bool_and , op_bool_or , op_bool_xor , op_bit_and , op_bit_or , op_bit_xor , Basic_end , Extra_begin = Basic_end , op_replace = Extra_begin , op_no_op , invalid , Extra_end = invalid , OpCodes_end = invalid } |

| Mapping of some MPI op codes. | |

| enum class | topoControls : int { broadcast = 1 , reduce = 2 , gather = 4 , combine = 16 , mapGather = 32 , gatherList = 64 } |

| Some bit masks corresponding to topology controls. | |

| typedef IntRange< int > | rangeType |

| Int ranges are used for MPI ranks (processes). | |

Public Member Functions | |

| ClassName ("UPstream") | |

| Declare name of the class and its debug switch. | |

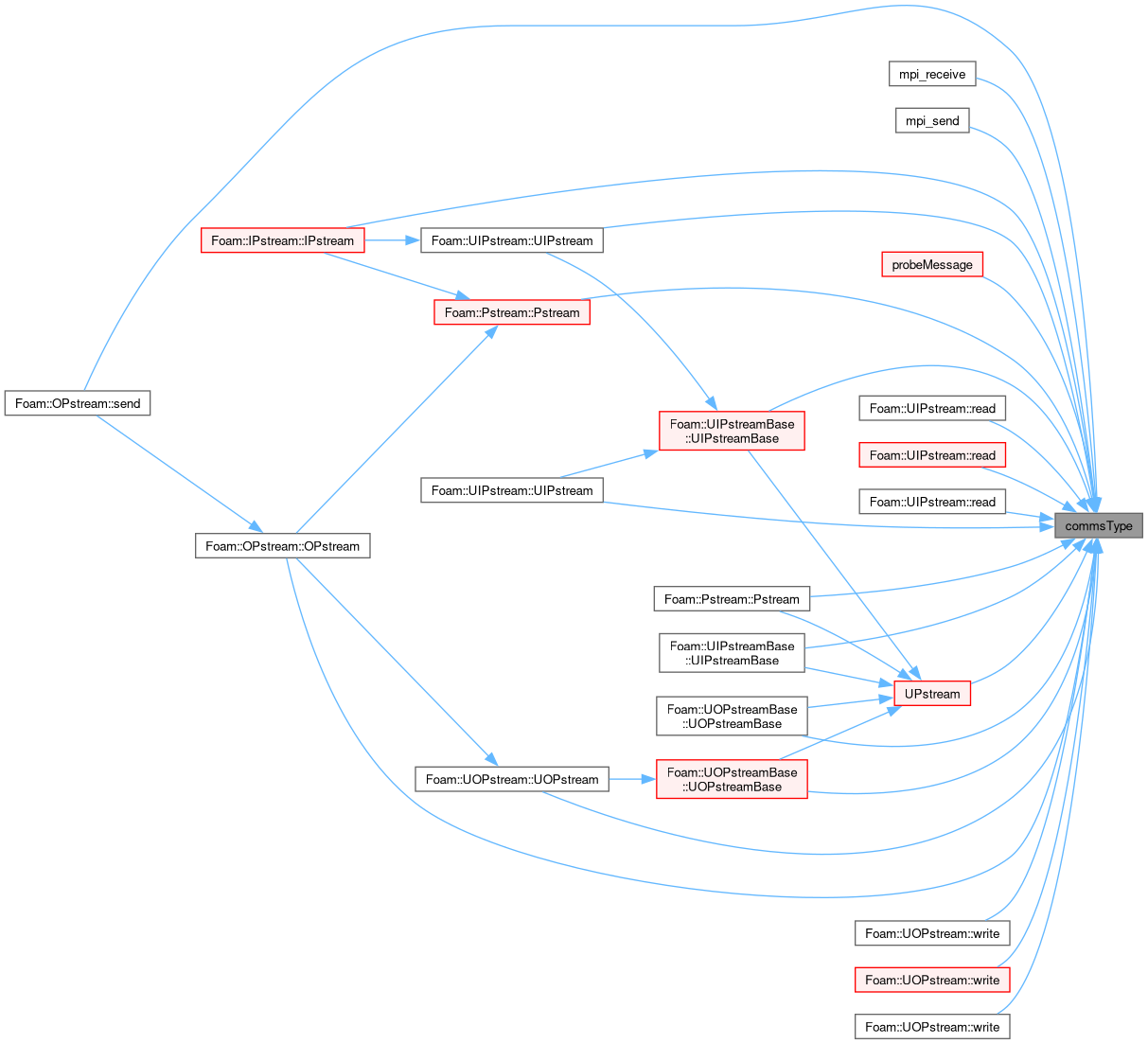

| UPstream (const commsTypes commsType) noexcept | |

| Construct for given communication type. | |

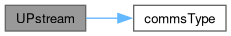

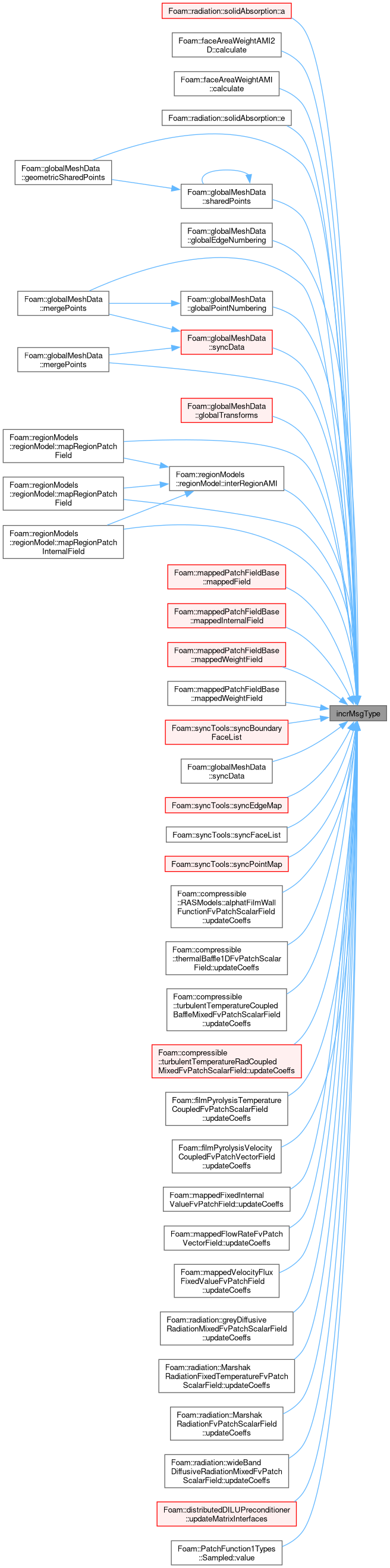

| commsTypes | commsType () const noexcept |

| Get the communications type of the stream. | |

| commsTypes | commsType (const commsTypes ct) noexcept |

| Set the communications type of the stream. | |

Static Public Member Functions | |

| static bool | usingTopoControl (UPstream::topoControls ctrl) noexcept |

| Test for selection of given topology-aware routine. | |

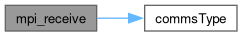

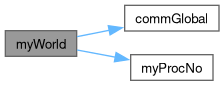

| static constexpr int | commGlobal () noexcept |

| Communicator for all ranks, irrespective of any local worlds. | |

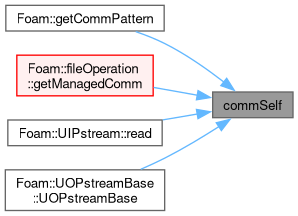

| static constexpr int | commSelf () noexcept |

| Communicator within the current rank only. | |

| static int | commConstWorld () noexcept |

| Communicator for all ranks (respecting any local worlds). | |

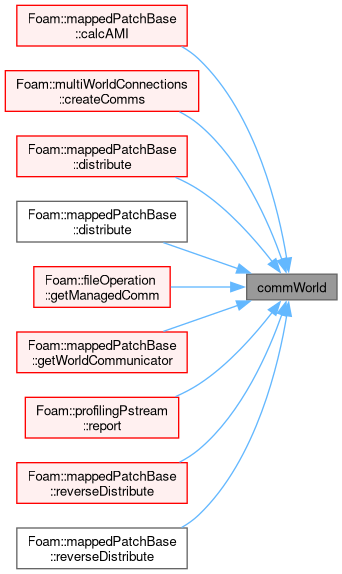

| static label | commWorld () noexcept |

| Communicator for all ranks (respecting any local worlds). | |

| static label | commWorld (const label communicator) noexcept |

| Set world communicator. Negative values are a no-op. | |

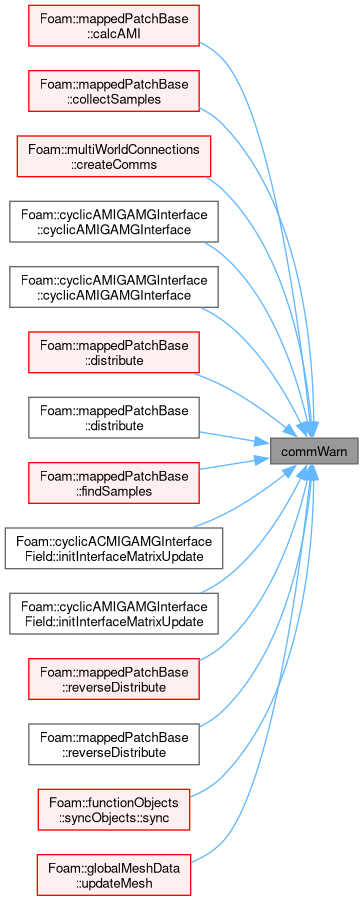

| static label | commWarn (const label communicator) noexcept |

| Alter communicator debugging setting. Warns for use of any communicator differing from specified. Negative values disable. | |

| static label | nComms () noexcept |

| Number of currently defined communicators. | |

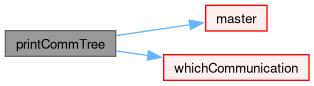

| static void | printCommTree (int communicator, bool linear=false) |

| Debugging: print the communication tree. | |

| static int | commInterNode () noexcept |

| Communicator between nodes/hosts (respects any local worlds). | |

| static int | commLocalNode () noexcept |

| Communicator within the node/host (respects any local worlds). | |

| static bool | hasNodeCommunicators () noexcept |

| Both inter-node and local-node communicators have been created. | |

| static bool | usingNodeComms (const int communicator) |

| True if node topology-aware routines have been enabled, the run is parallel, the starting point is the world-communicator, and it is not an odd corner case (i.e., all processes on one node, or all processes on different nodes). | |

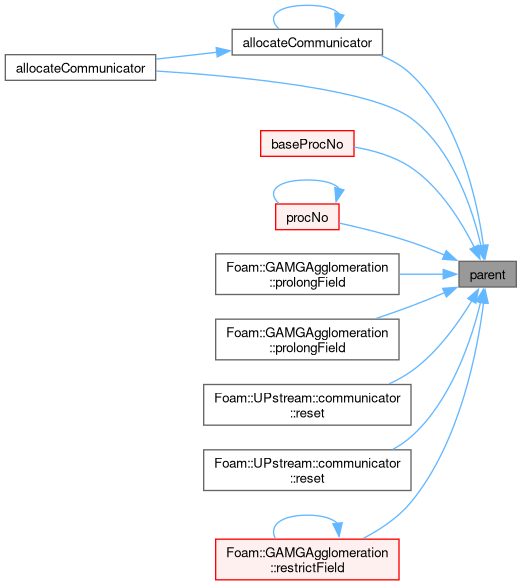

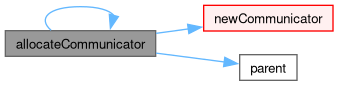

| static label | newCommunicator (const label parent, const labelRange &subRanks, const bool withComponents=true) |

| Create new communicator with sub-ranks on the parent communicator. | |

| static label | newCommunicator (const label parent, const labelUList &subRanks, const bool withComponents=true) |

| Create new communicator with sub-ranks on the parent communicator. | |

| static label | dupCommunicator (const label parent) |

| Duplicate the parent communicator. | |

| static label | splitCommunicator (const label parent, const int colour, const bool two_step=true) |

| Allocate a new communicator by splitting the parent communicator on the given colour. | |

| static void | freeCommunicator (const label communicator, const bool withComponents=true) |

| Free a previously allocated communicator. | |

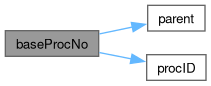

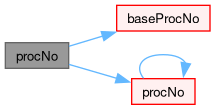

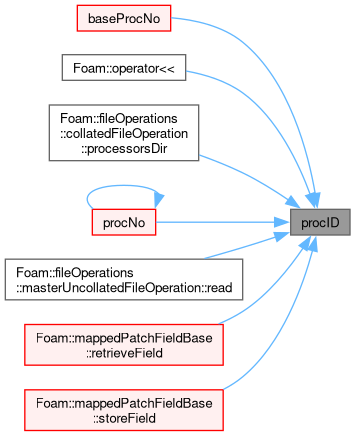

| static int | baseProcNo (label comm, int procID) |

| Return physical processor number (i.e. processor number in worldComm) given communicator and processor. | |

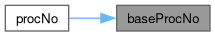

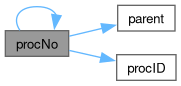

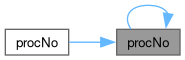

| static label | procNo (const label comm, const int baseProcID) |

| Return processor number in communicator (given physical processor number) (= reverse of baseProcNo). | |

| static label | procNo (const label comm, const label currentComm, const int currentProcID) |

| Return processor number in communicator (given processor number and communicator). | |

| static void | addValidParOptions (HashTable< string > &validParOptions) |

| Add the valid options that this type of communications library adds/requires on the command line. | |

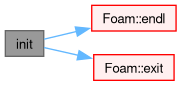

| static bool | init (int &argc, char **&argv, const bool needsThread) |

| Initialisation function called from main. | |

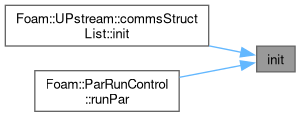

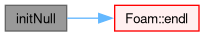

| static bool | initNull () |

| Special purpose initialisation function. | |

| static void | barrier (const int communicator, UPstream::Request *req=nullptr) |

| Impose a synchronisation barrier (optionally non-blocking). | |

| static void | send_done (const int toProcNo, const int communicator, const int tag=UPstream::msgType()+1970) |

| Impose a point-to-point synchronisation barrier by sending a zero-byte "done" message to given rank. | |

| static int | wait_done (const int fromProcNo, const int communicator, const int tag=UPstream::msgType()+1970) |

| Impose a point-to-point synchronisation barrier by receiving a zero-byte "done" message from given rank. | |

| static std::pair< int, int64_t > | probeMessage (const UPstream::commsTypes commsType, const int fromProcNo, const int tag=UPstream::msgType(), const int communicator=worldComm) |

| Probe for an incoming message. | |

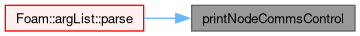

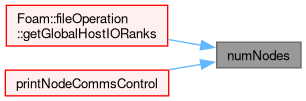

| static void | printNodeCommsControl (Ostream &os) |

| Report the node-communication settings. | |

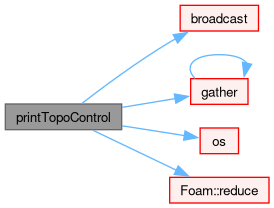

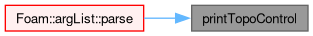

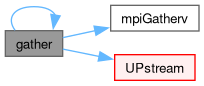

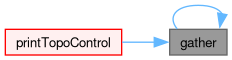

| static void | printTopoControl (Ostream &os) |

| Report the topology routines settings. | |

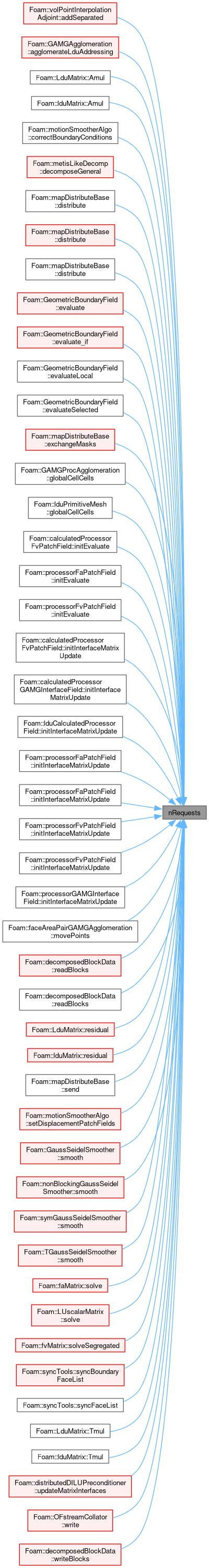

| static label | nRequests () noexcept |

| Number of outstanding requests (on the internal list of requests). | |

| static void | resetRequests (const label n) |

| Truncate outstanding requests to given length, which is expected to be in the range [0 to nRequests()]. | |

| static void | addRequest (UPstream::Request &req) |

| Transfer the (wrapped) MPI request to the internal global list and invalidate the parameter (ignores null requests). | |

| static void | cancelRequest (const label i) |

| Non-blocking comms: cancel and free outstanding request. Corresponds to MPI_Cancel() + MPI_Request_free(). | |

| static void | cancelRequest (UPstream::Request &req) |

| Non-blocking comms: cancel and free outstanding request. Corresponds to MPI_Cancel() + MPI_Request_free(). | |

| static void | cancelRequests (UList< UPstream::Request > &requests) |

| Non-blocking comms: cancel and free outstanding requests. Corresponds to MPI_Cancel() + MPI_Request_free(). | |

| static void | removeRequests (label pos, label len=-1) |

| Non-blocking comms: cancel/free outstanding requests (from position onwards) and remove from internal list of requests. Corresponds to MPI_Cancel() + MPI_Request_free(). | |

| static void | freeRequest (UPstream::Request &req) |

| Non-blocking comms: free outstanding request. Corresponds to MPI_Request_free(). | |

| static void | freeRequests (UList< UPstream::Request > &requests) |

| Non-blocking comms: free outstanding requests. Corresponds to MPI_Request_free(). | |

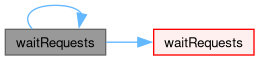

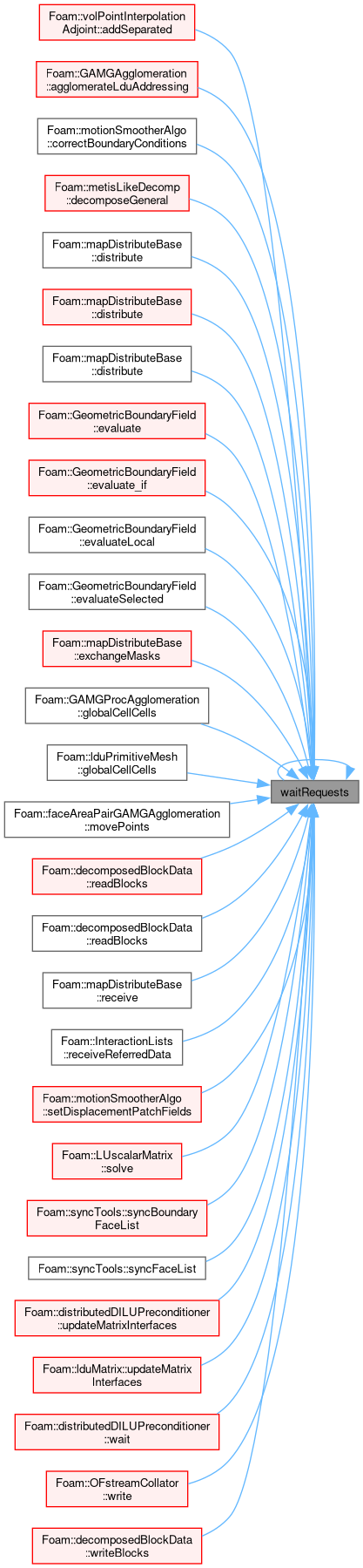

| static void | waitRequests (label pos, label len=-1) |

| Wait until all requests (from position onwards) have finished. Corresponds to MPI_Waitall(). | |

| static void | waitRequests (UList< UPstream::Request > &requests) |

| Wait until all requests have finished. Corresponds to MPI_Waitall(). | |

| static bool | waitAnyRequest (label pos, label len=-1) |

| Wait until any request (from position onwards) has finished. Corresponds to MPI_Waitany(). | |

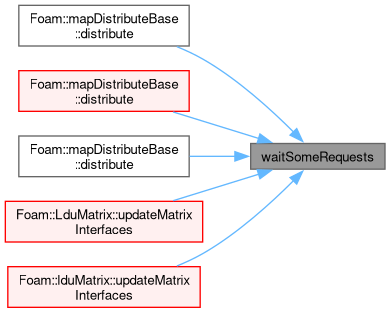

| static bool | waitSomeRequests (label pos, label len=-1, DynamicList< int > *indices=nullptr) |

| Wait until some requests (from position onwards) have finished. Corresponds to MPI_Waitsome(). | |

| static bool | waitSomeRequests (UList< UPstream::Request > &requests, DynamicList< int > *indices=nullptr) |

| Wait until some requests have finished. Corresponds to MPI_Waitsome(). | |

| static int | waitAnyRequest (UList< UPstream::Request > &requests) |

| Wait until any request has finished and return its index. Corresponds to MPI_Waitany(). | |

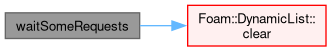

| static void | waitRequest (const label i) |

| Wait until request i has finished. Corresponds to MPI_Wait(). | |

| static void | waitRequest (UPstream::Request &req) |

| Wait until specified request has finished. Corresponds to MPI_Wait(). | |

| static bool | activeRequest (const label i) |

| Is request i active (!= MPI_REQUEST_NULL)? | |

| static bool | activeRequest (const UPstream::Request &req) |

| Is request active (!= MPI_REQUEST_NULL)? | |

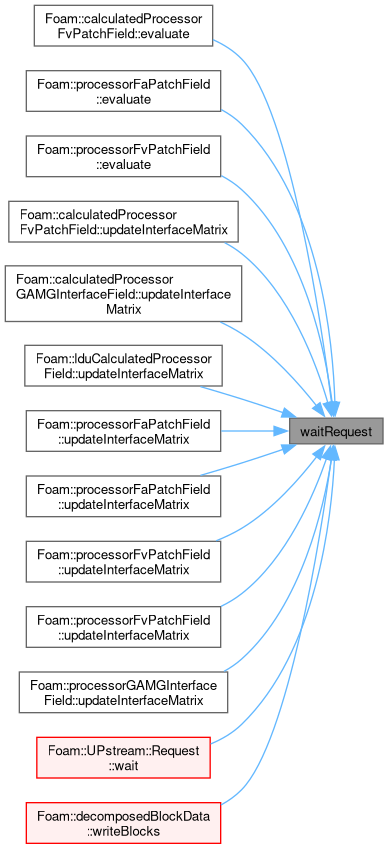

| static bool | finishedRequest (const label i) |

| Non-blocking comms: has request i finished? Corresponds to MPI_Test(). | |

| static bool | finishedRequest (UPstream::Request &req) |

| Non-blocking comms: has request finished? Corresponds to MPI_Test(). | |

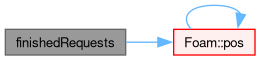

| static bool | finishedRequests (label pos, label len=-1) |

| Non-blocking comms: have all requests (from position onwards) finished? Corresponds to MPI_Testall(). | |

| static bool | finishedRequests (UList< UPstream::Request > &requests) |

| Non-blocking comms: have all requests finished? Corresponds to MPI_Testall(). | |

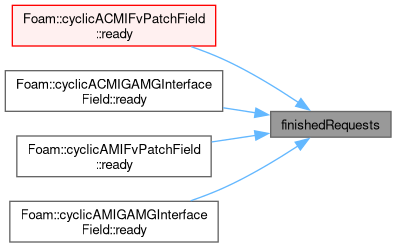

| static bool | finishedRequestPair (label &req0, label &req1) |

| Non-blocking comms: have both requests finished? Corresponds to pair of MPI_Test(). | |

| static void | waitRequestPair (label &req0, label &req1) |

| Non-blocking comms: wait for both requests to finish. Corresponds to pair of MPI_Wait(). | |

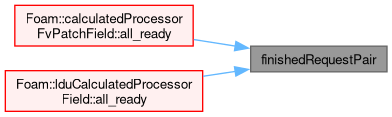

| static bool | parRun (const bool on) noexcept |

| Set as parallel run on/off. | |

| static bool & | parRun () noexcept |

| Test if this is a parallel run. | |

| static bool | haveThreads () noexcept |

| Have support for threads. | |

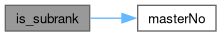

| static constexpr int | masterNo () noexcept |

| Relative rank for the master process - is always 0. | |

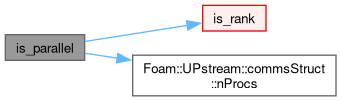

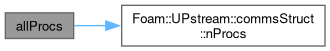

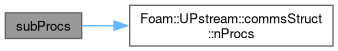

| static label | nProcs (const label communicator=worldComm) |

| Number of ranks in a parallel run (for the given communicator). It is 1 for a serial run. | |

| static int | myProcNo (const label communicator=worldComm) |

| Rank of this process in the communicator (starting from masterNo()). Negative if the process is not a rank in the communicator. | |

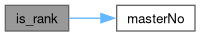

| static bool | master (const label communicator=worldComm) |

| True if process corresponds to the master rank in the communicator. | |

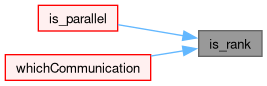

| static bool | is_rank (const label communicator=worldComm) |

| True if process corresponds to any rank (master or sub-rank) in the given communicator. | |

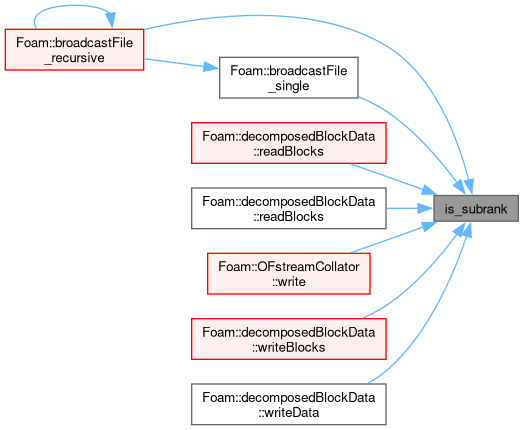

| static bool | is_subrank (const label communicator=worldComm) |

| True if process corresponds to a sub-rank in the given communicator. | |

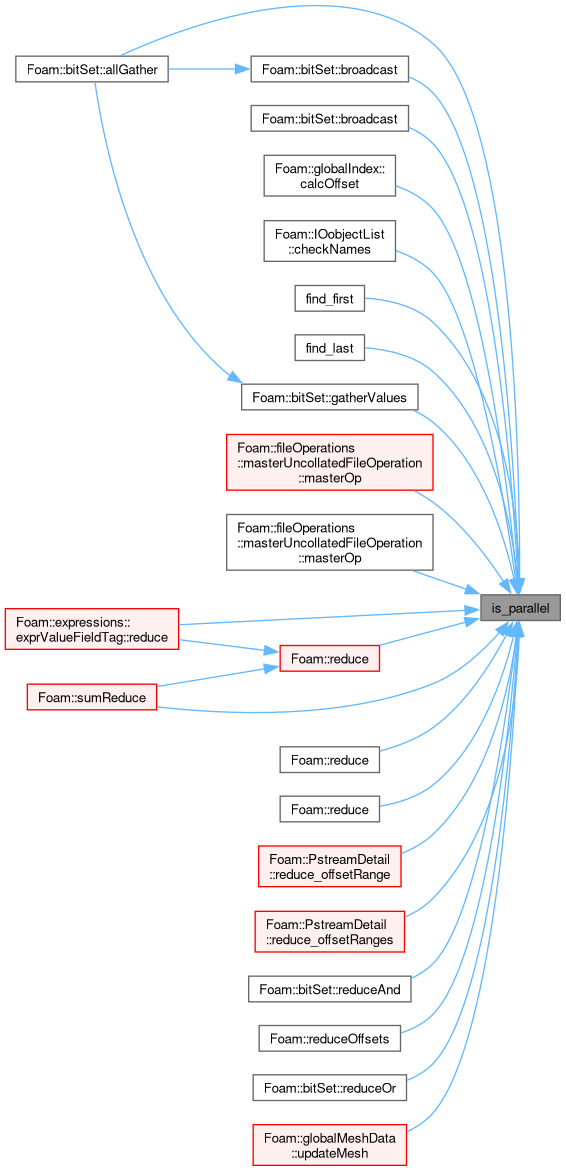

| static bool | is_parallel (const label communicator=worldComm) |

| True if parallel algorithm or exchange is required. | |

| static int | numNodes () noexcept |

| The number of shared/host nodes in the (const) world communicator. | |

| static label | parent (int communicator) |

| The parent communicator. | |

| static List< int > & | procID (int communicator) |

| The list of ranks within a given communicator. | |

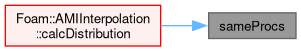

| static bool | sameProcs (int communicator1, int communicator2) |

| Test for communicator equality. | |

| template<typename T1, typename = std::void_t <std::enable_if_t<std::is_integral_v<T1>>>> | |

| static bool | sameProcs (int communicator, const UList< T1 > &procs) |

| Test equality of communicator procs with the given list of ranks. Includes a guard for the communicator index. | |

| template<typename T1, typename T2, typename = std::void_t < std::enable_if_t<std::is_integral_v<T1>>, std::enable_if_t<std::is_integral_v<T2>> >> | |

| static bool | sameProcs (const UList< T1 > &procs1, const UList< T2 > &procs2) |

| Test the equality of two lists of ranks. | |

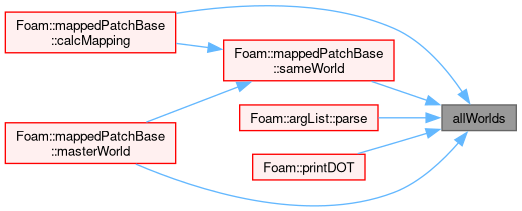

| static const wordList & | allWorlds () noexcept |

| All worlds. | |

| static const labelList & | worldIDs () noexcept |

| The indices into allWorlds for all processes. | |

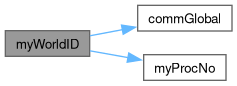

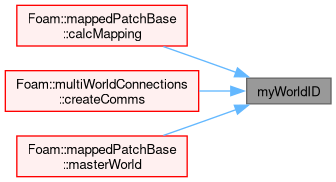

| static label | myWorldID () |

| My worldID. | |

| static const word & | myWorld () |

| My world. | |

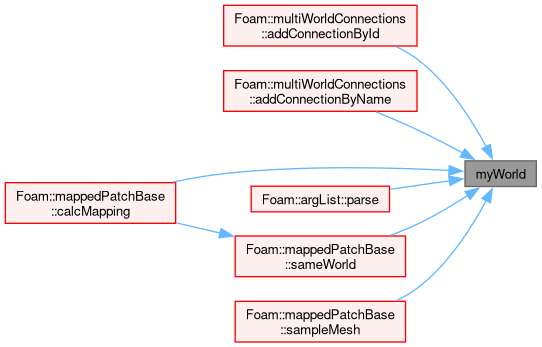

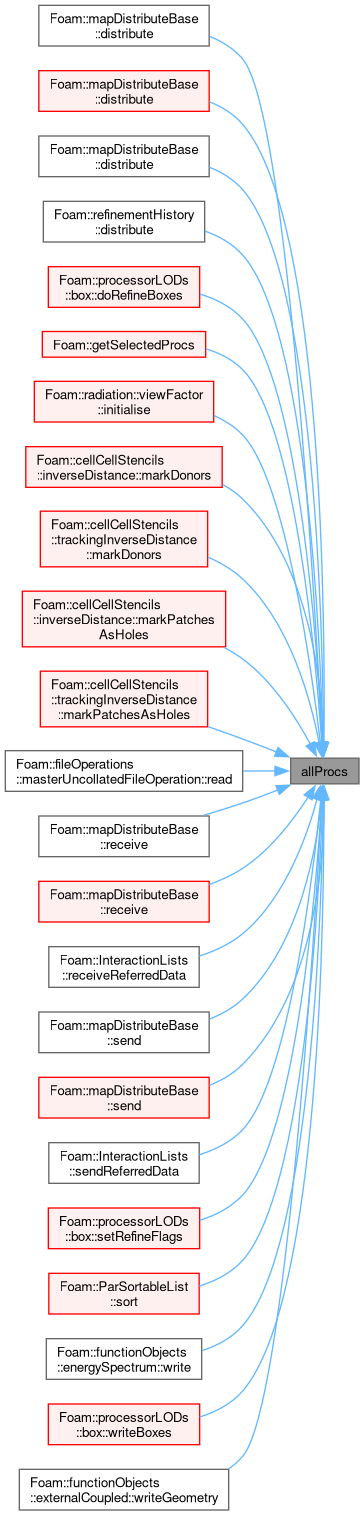

| static rangeType | allProcs (const label communicator=worldComm) |

| Range of process indices for all processes. | |

| static rangeType | subProcs (const label communicator=worldComm) |

| Range of process indices for sub-processes. | |

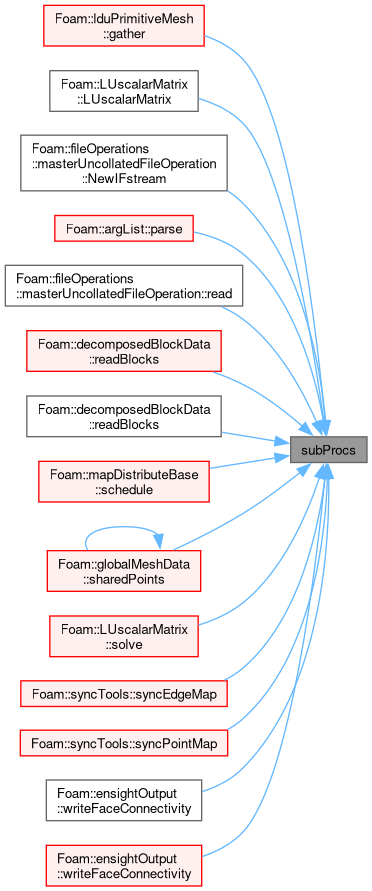

| static const List< int > & | interNode_offsets () |

| Processor offsets corresponding to the inter-node communicator. | |

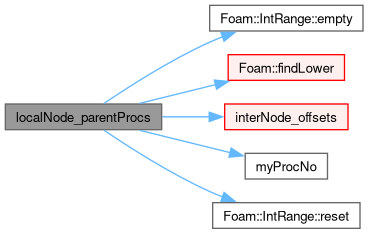

| static const rangeType & | localNode_parentProcs () |

| The range (start/size) of the commLocalNode ranks in terms of the (const) world communicator processors. | |

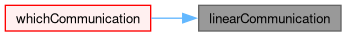

| static const commsStructList & | linearCommunication (int communicator) |

| Linear communication schedule (special case) for given communicator. | |

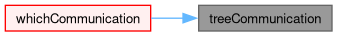

| static const commsStructList & | treeCommunication (int communicator) |

| Tree communication schedule (standard case) for given communicator. | |

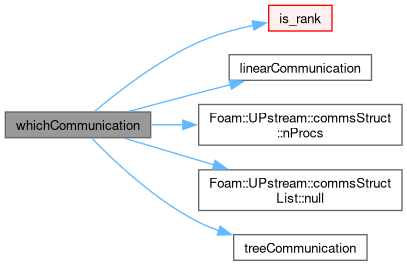

| static const commsStructList & | whichCommunication (const int communicator, bool linear=false) |

| Communication schedule for all-to-master (proc 0) as linear/tree/none with switching based on UPstream::nProcsSimpleSum, the is_parallel() state and the optional linear parameter. | |

| static int & | msgType () noexcept |

| Message tag of standard messages. | |

| static int | msgType (int val) noexcept |

| Set the message tag for standard messages. | |

| static int | incrMsgType (int val=1) noexcept |

| Increment the message tag for standard messages. | |

| static void | shutdown (int errNo=0) |

| Shutdown (finalize) MPI as required. | |

| static void | abort (int errNo=1) |

| Call MPI_Abort with no other checks or cleanup. | |

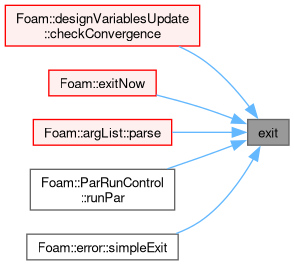

| static void | exit (int errNo=1) |

| Shutdown (finalize) MPI as required and exit program with errNo. | |

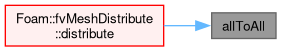

| static void | allToAll (const UList< int32_t > &sendData, UList< int32_t > &recvData, const int communicator=UPstream::worldComm) |

| Exchange int32_t data with all ranks in communicator. | |

| static void | allToAllConsensus (const UList< int32_t > &sendData, UList< int32_t > &recvData, const int tag, const int communicator=UPstream::worldComm) |

| Exchange non-zero int32_t data between ranks [NBX]. | |

| static void | allToAllConsensus (const Map< int32_t > &sendData, Map< int32_t > &recvData, const int tag, const int communicator=UPstream::worldComm) |

| Exchange int32_t data between ranks [NBX]. | |

| static Map< int32_t > | allToAllConsensus (const Map< int32_t > &sendData, const int tag, const int communicator=UPstream::worldComm) |

| Exchange int32_t data between ranks [NBX]. | |

| static void | allToAll (const UList< int64_t > &sendData, UList< int64_t > &recvData, const int communicator=UPstream::worldComm) |

| Exchange int64_t data with all ranks in communicator. | |

| static void | allToAllConsensus (const UList< int64_t > &sendData, UList< int64_t > &recvData, const int tag, const int communicator=UPstream::worldComm) |

| Exchange non-zero int64_t data between ranks [NBX]. | |

| static void | allToAllConsensus (const Map< int64_t > &sendData, Map< int64_t > &recvData, const int tag, const int communicator=UPstream::worldComm) |

| Exchange int64_t data between ranks [NBX]. | |

| static Map< int64_t > | allToAllConsensus (const Map< int64_t > &sendData, const int tag, const int communicator=UPstream::worldComm) |

| Exchange int64_t data between ranks [NBX]. | |

| template<class Type> | |

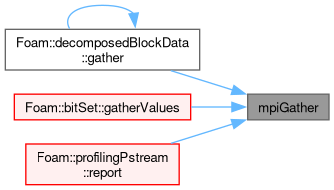

| static void | mpiGather (const Type *sendData, Type *recvData, int count, const int communicator=UPstream::worldComm) |

| Receive identically-sized (contiguous) data from all ranks. | |

| template<class Type> | |

| static void | mpiScatter (const Type *sendData, Type *recvData, int count, const int communicator=UPstream::worldComm) |

| Send identically-sized (contiguous) data to all ranks. | |

| template<class Type> | |

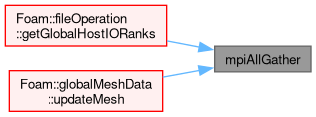

| static void | mpiAllGather (Type *allData, int count, const int communicator=UPstream::worldComm) |

| Gather/scatter identically-sized data. | |

| template<class Type> | |

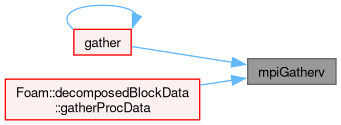

| static void | mpiGatherv (const Type *sendData, int sendCount, Type *recvData, const UList< int > &recvCounts, const UList< int > &recvOffsets, const int communicator=UPstream::worldComm) |

| Receive variable length data from all ranks. | |

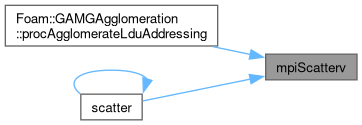

| template<class Type> | |

| static void | mpiScatterv (const Type *sendData, const UList< int > &sendCounts, const UList< int > &sendOffsets, Type *recvData, int recvCount, const int communicator=UPstream::worldComm) |

| Send variable length data to all ranks. | |

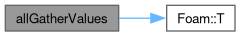

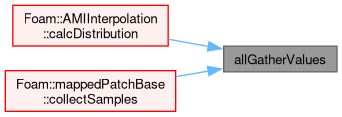

| template<class T> | |

| static List< T > | allGatherValues (const T &localValue, const int communicator=UPstream::worldComm) |

| Allgather individual values into list locations. | |

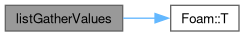

| template<class T> | |

| static List< T > | listGatherValues (const T &localValue, const int communicator=UPstream::worldComm) |

| Gather individual values into list locations. | |

| template<class T> | |

| static T | listScatterValues (const UList< T > &allValues, const int communicator=UPstream::worldComm) |

| Scatter individual values from list locations. | |

| template<class Type> | |

| static bool | broadcast (Type *buffer, std::streamsize count, const int communicator, const int root=UPstream::masterNo()) |

| Broadcast buffer contents (contiguous types) to all ranks (default: from rank=0). The sizes must match on all processes. | |

| template<class Type, unsigned N> | |

| static bool | broadcast (FixedList< Type, N > &list, const int communicator, const int root=UPstream::masterNo()) |

| Broadcast fixed-list content (contiguous types) to all ranks (default: from rank=0). The sizes must match on all processes. | |

| template<class T> | |

| static void | mpiReduce (T values[], int count, const UPstream::opCodes opCodeId, const int communicator) |

| MPI_Reduce (blocking) for known operators. | |

| template<UPstream::opCodes opCode, class T> | |

| static void | mpiReduce (T values[], int count, const int communicator) |

| MPI_Reduce (blocking) for known operators. | |

| template<class T> | |

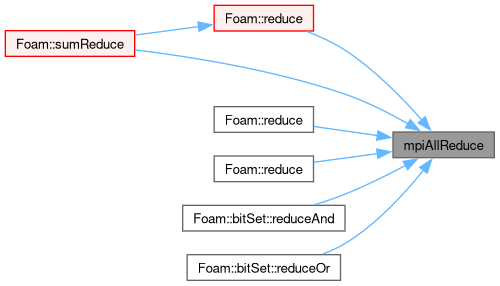

| static void | mpiAllReduce (T values[], int count, const UPstream::opCodes opCodeId, const int communicator) |

| MPI_Allreduce (blocking) for known operators. | |

| template<UPstream::opCodes opCode, class T> | |

| static void | mpiAllReduce (T values[], int count, const int communicator) |

| MPI_Allreduce (blocking) for known operators. | |

| template<class T> | |

| static void | mpiAllReduce (T values[], int count, const UPstream::opCodes opCodeId, const int communicator, UPstream::Request &req) |

| MPI_Iallreduce (non-blocking) for known operators. | |

| template<UPstream::opCodes opCode, class T> | |

| static void | mpiAllReduce (T values[], int count, const int communicator, UPstream::Request &req) |

| MPI_Iallreduce (non-blocking) for known operators. | |

| template<Foam::UPstream::opCodes opCode, class T> | |

| static void | mpiScan (T values[], int count, const int communicator, const bool exclusive=false) |

| Inclusive/exclusive scan (in-place). | |

| template<Foam::UPstream::opCodes opCode, class T> | |

| static T | mpiScan (const T &localValue, const int communicator, const bool exclusive=false) |

| Inclusive/exclusive scan returning the result. In exclusive mode, the degenerate value on rank=0 has no meaning but is treated like non-exclusive mode (i.e., original values). | |

| template<class T> | |

| static void | mpiScan_min (T values[], int count, const int communicator, const bool exclusive=false) |

| Inclusive/exclusive min scan (in-place). | |

| template<class T> | |

| static void | mpiExscan_min (T values[], int count, const int communicator) |

| Exclusive min scan (in-place). | |

| template<class T> | |

| static T | mpiScan_min (const T &value, const int communicator, const bool exclusive=false) |

| Inclusive/exclusive min scan returning result. | |

| template<class T> | |

| static T | mpiExscan_min (const T &value, const int communicator) |

| Exclusive min scan returning result. | |

| template<class T> | |

| static void | mpiScan_max (T values[], int count, const int communicator, const bool exclusive=false) |

| Inclusive/exclusive max scan (in-place). | |

| template<class T> | |

| static void | mpiExscan_max (T values[], int count, const int communicator) |

| Exclusive max scan (in-place). | |

| template<class T> | |

| static T | mpiScan_max (const T &value, const int communicator, const bool exclusive=false) |

| Inclusive/exclusive max scan returning result. | |

| template<class T> | |

| static T | mpiExscan_max (const T &value, const int communicator) |

| Exclusive max scan returning result. | |

| template<class T> | |

| static void | mpiScan_sum (T values[], int count, const int communicator, const bool exclusive=false) |

| Inclusive/exclusive sum scan (in-place). | |

| template<class T> | |

| static void | mpiExscan_sum (T values[], int count, const int communicator) |

| Exclusive sum scan (in-place). | |

| template<class T> | |

| static T | mpiScan_sum (const T &value, const int communicator, const bool exclusive=false) |

| Inclusive/exclusive sum scan returning result. | |

| template<class T> | |

| static T | mpiExscan_sum (const T &value, const int communicator) |

| Exclusive sum scan returning result. | |

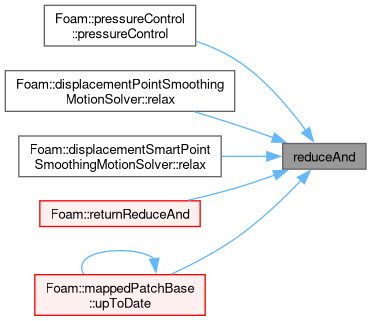

| static void | reduceAnd (bool &value, const int communicator=worldComm) |

| Logical (and) reduction (MPI_AllReduce). | |

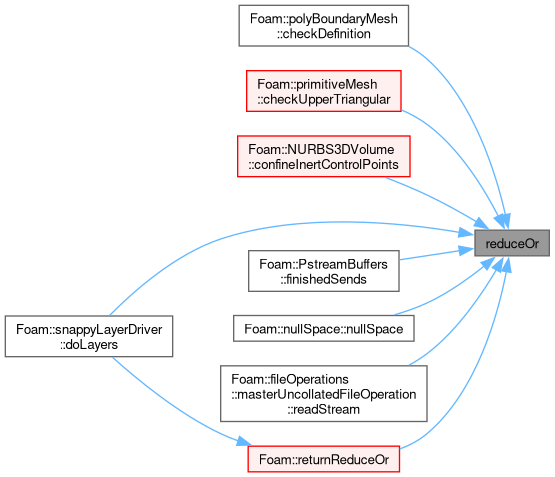

| static void | reduceOr (bool &value, const int communicator=worldComm) |

| Logical (or) reduction (MPI_AllReduce). | |

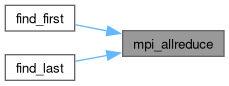

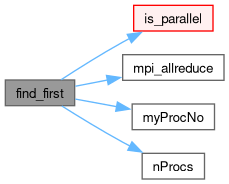

| static int | find_first (bool condition, int communicator) |

| Locate the first rank for which the condition is true, or -1 if no ranks satisfy the condition. | |

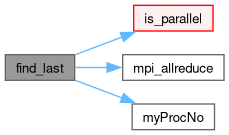

| static int | find_last (bool condition, int communicator) |

| Locate the last rank for which the condition is true, or -1 if no ranks satisfy the condition. | |

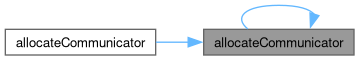

| static label | allocateCommunicator (const label parent, const labelRange &subRanks, const bool withComponents=true) |

| static label | allocateCommunicator (const label parent, const labelUList &subRanks, const bool withComponents=true) |

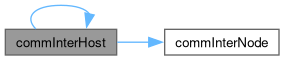

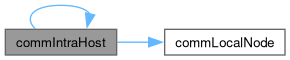

| static label | commInterHost () noexcept |

| Communicator between nodes (respects any local worlds). | |

| static label | commIntraHost () noexcept |

| Communicator within the node (respects any local worlds). | |

| static void | waitRequests () |

| Wait for all requests to finish. | |

| template<class Type> | |

| static void | gather (const Type *send, int count, Type *recv, const UList< int > &counts, const UList< int > &offsets, const int comm=UPstream::worldComm) |

| template<class Type> | |

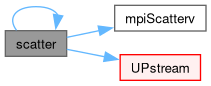

| static void | scatter (const Type *send, const UList< int > &counts, const UList< int > &offsets, Type *recv, int count, const int comm=UPstream::worldComm) |
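The inclusive/exclusive distinction in the mpiScan family listed above can be illustrated without MPI. The following is a minimal serial sketch, not OpenFOAM code: `simulateScanSum` is a hypothetical helper in which element `r` of the input stands in for the value held by rank `r`. It follows the documented convention that, in exclusive mode, rank 0 keeps its original value.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical serial stand-in for a sum scan across ranks.
// perRank[r] plays the role of the local value on rank r.
//   exclusive == false: result[r] = perRank[0] + ... + perRank[r]
//   exclusive == true : result[r] = perRank[0] + ... + perRank[r-1],
//                       with rank 0 keeping its original value
std::vector<int> simulateScanSum(const std::vector<int>& perRank, bool exclusive)
{
    std::vector<int> result(perRank.size());
    int running = 0;  // sum of all values on lower ranks

    for (std::size_t r = 0; r < perRank.size(); ++r)
    {
        if (exclusive)
        {
            // Rank 0 has no lower ranks: keep the original value
            result[r] = (r == 0) ? perRank[r] : running;
            running += perRank[r];
        }
        else
        {
            running += perRank[r];
            result[r] = running;
        }
    }
    return result;
}
```

With per-rank values 1, 2, 3, 4 the inclusive result is 1, 3, 6, 10 and the exclusive result is 1, 1, 3, 6.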

Static Public Attributes | |

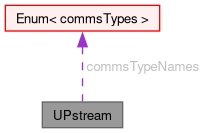

| static const Enum< commsTypes > | commsTypeNames |

| Enumerated names for the communication types. | |

| static int | nodeCommsControl_ |

| Use of host/node topology-aware routines. | |

| static int | nodeCommsMin_ |

| Minimum number of nodes before topology-aware routines are enabled. | |

| static int | topologyControl_ |

| Selection of topology-aware routines as a bitmask combination of the topoControls enumerations. | |

| static bool | floatTransfer |

| Whether to use compact transfer, in which floats replace doubles, reducing the bandwidth requirement at the expense of some loss in accuracy. | |

| static int | nProcsSimpleSum |

| Number of processors to change from linear to tree communication. | |

| static int | nProcsNonblockingExchange |

| Number of processors to change to nonBlocking consensual exchange (NBX). Ignored for zero or negative values. | |

| static int | nPollProcInterfaces |

| Number of polling cycles in processor updates. | |

| static commsTypes | defaultCommsType |

| Default commsType. | |

| static int | maxCommsSize |

| Optional maximum message size (bytes). | |

| static int | tuning_NBX_ |

| Tuning parameters for non-blocking exchange (NBX). | |

| static const int | mpiBufferSize |

| MPI buffer-size (bytes). | |

| static label | worldComm |

| Communicator for all ranks. May differ from commGlobal() if local worlds are in use. | |

| static label | warnComm |

| Debugging: warn for use of any communicator differing from warnComm. | |
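The role of nProcsSimpleSum in choosing between linear and tree schedules (see whichCommunication()) might be sketched as follows. This is an illustrative assumption, not the library implementation: `Schedule` and `chooseSchedule` are hypothetical names, and the direction of the comparison (fewer ranks than the threshold selects linear) is assumed from the attribute description.

```cpp
// Hypothetical result type for the three cases named in the documentation
enum class Schedule { none, linear, tree };

// Sketch of a schedule selection (assumed logic):
//  - not parallel: no schedule needed
//  - linear requested, or fewer ranks than the threshold: linear
//  - otherwise: tree
constexpr Schedule chooseSchedule
(
    int nProcs,
    int nProcsSimpleSum,
    bool isParallel,
    bool linear = false
) noexcept
{
    return !isParallel ? Schedule::none
         : (linear || nProcs < nProcsSimpleSum) ? Schedule::linear
         : Schedule::tree;
}
```

The threshold values used when calling this sketch are arbitrary; the actual default of nProcsSimpleSum is not stated on this page.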

Static Protected Member Functions | |

| static bool | mpi_broadcast (void *buf, std::streamsize count, const UPstream::dataTypes dataTypeId, const int communicator, const int root=0) |

| Broadcast buffer contents to all ranks (default: from rank=0). The sizes must match on all processes. | |

| static void | mpi_reduce (void *values, int count, const UPstream::dataTypes dataTypeId, const UPstream::opCodes opCodeId, const int communicator, UPstream::Request *req=nullptr) |

| In-place reduction of values with result on rank 0. | |

| static void | mpi_allreduce (void *values, int count, const UPstream::dataTypes dataTypeId, const UPstream::opCodes opCodeId, const int communicator, UPstream::Request *req=nullptr) |

| In-place reduction of values with same result on all ranks. | |

| static void | mpi_scan_reduce (void *values, int count, const UPstream::dataTypes dataTypeId, const UPstream::opCodes opCodeId, const int communicator, const bool exclusive) |

| In-place scan/exscan reduction of values. | |

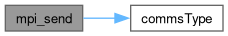

| static bool | mpi_send (const UPstream::commsTypes commsType, const void *buf, std::streamsize count, const UPstream::dataTypes dataTypeId, const int toProcNo, const int tag, const int communicator, UPstream::Request *req=nullptr, const UPstream::sendModes sendMode=UPstream::sendModes::normal) |

| Send buffer contents of specified data type to given processor. | |

| static std::streamsize | mpi_receive (const UPstream::commsTypes commsType, void *buf, std::streamsize count, const UPstream::dataTypes dataTypeId, const int fromProcNo, const int tag, const int communicator, UPstream::Request *req=nullptr) |

| Receive buffer contents of specified data type from given processor. | |

| static void | mpi_gather (const void *sendData, void *recvData, int count, const UPstream::dataTypes dataTypeId, const int communicator, UPstream::Request *req=nullptr) |

| Receive identically-sized (contiguous) data from all ranks, placing the result on rank 0. | |

| static void | mpi_scatter (const void *sendData, void *recvData, int count, const UPstream::dataTypes dataTypeId, const int communicator, UPstream::Request *req=nullptr) |

| Send identically-sized (contiguous) data from rank 0 to all other ranks. | |

| static void | mpi_allgather (void *allData, int count, const UPstream::dataTypes dataTypeId, const int communicator, UPstream::Request *req=nullptr) |

| Gather/scatter identically-sized data. | |

| static void | mpi_gatherv (const void *sendData, int sendCount, void *recvData, const UList< int > &recvCounts, const UList< int > &recvOffsets, const UPstream::dataTypes dataTypeId, const int communicator) |

| Receive variable length data from all ranks, placing the result on rank 0. (caution: known to scale poorly). | |

| static void | mpi_scatterv (const void *sendData, const UList< int > &sendCounts, const UList< int > &sendOffsets, void *recvData, int recvCount, const UPstream::dataTypes dataTypeId, const int communicator) |

| Send variable length data from rank 0 to all ranks. (caution: known to scale poorly). | |
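The variable-length gather and scatter routines above take per-rank counts together with offsets into the contiguous buffer. A common way to obtain the offsets is an exclusive prefix sum of the counts. The sketch below shows that relationship in serial code; `offsetsFromCounts` and `emulateGatherv` are hypothetical helpers using `std::vector` in place of `UList<int>`, and no MPI call is made.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Offsets are the exclusive prefix sum of the per-rank counts:
// rank r occupies [offsets[r], offsets[r] + counts[r]) in the buffer.
std::vector<int> offsetsFromCounts(const std::vector<int>& counts)
{
    std::vector<int> offsets(counts.size());
    int total = 0;
    for (std::size_t r = 0; r < counts.size(); ++r)
    {
        assert(counts[r] >= 0);  // negative counts are meaningless
        offsets[r] = total;
        total += counts[r];
    }
    return offsets;
}

// Serial emulation of a variable-length gather into one receive buffer.
// perRank[r] stands in for the data that rank r would send.
std::vector<int> emulateGatherv(const std::vector<std::vector<int>>& perRank)
{
    std::vector<int> counts;
    for (const auto& data : perRank)
    {
        counts.push_back(static_cast<int>(data.size()));
    }
    const std::vector<int> offsets = offsetsFromCounts(counts);

    int total = 0;
    for (const int c : counts)
    {
        total += c;
    }

    // Place each rank's contribution at its offset
    std::vector<int> recv(total);
    for (std::size_t r = 0; r < perRank.size(); ++r)
    {
        std::copy(perRank[r].begin(), perRank[r].end(), recv.begin() + offsets[r]);
    }
    return recv;
}
```

For counts 2, 0, 3 the offsets are 0, 2, 2, so a rank that contributes nothing shares its offset with the next rank.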

Inter-processor communications stream.

Definition at line 68 of file UPstream.H.

Int ranges are used for MPI ranks (processes).

Definition at line 75 of file UPstream.H.

| enum class commsTypes : char | strong |

Communications types.

Definition at line 80 of file UPstream.H.

| enum class sendModes : char | strong |

Different MPI-send modes (ignored for commsTypes::buffered).

| Enumerator | |
|---|---|
| normal | (MPI_Send, MPI_Isend) |
| sync | (MPI_Ssend, MPI_Issend) |

Definition at line 97 of file UPstream.H.

| enum class dataTypes : char | strong |

Mapping of some fundamental and aggregate types to MPI data types.

| Enumerator | |
|---|---|
| Basic_begin | (internal use) begin marker [basic/all types] |
| type_byte | byte, char, unsigned char, ... |
| type_int16 | |
| type_int32 | |
| type_int64 | |
| type_uint16 | |
| type_uint32 | |
| type_uint64 | |
| type_float | |
| type_double | |
| type_long_double | |
| Basic_end | (internal use) end marker [basic types] |
| User_begin | (internal use) begin marker [user types] |
| type_3float | 3*float (eg, floatVector) |
| type_3double | 3*double (eg, doubleVector) |
| type_6float | 6*float (eg, floatSymmTensor, complex vector) |
| type_6double | 6*double (eg, doubleSymmTensor, complex vector) |
| type_9float | 9*float (eg, floatTensor) |
| type_9double | 9*double (eg, doubleTensor) |
| invalid | invalid type (NULL) |
| User_end | (internal use) end marker [user types] |
| DataTypes_end | (internal use) end marker [all types] |

Definition at line 106 of file UPstream.H.
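The aggregate entries in the table describe fixed multiples of a floating-point type (3, 6 or 9 components). The following standalone sketch illustrates that relationship only; `DataType`, `nComponents` and `byteSize` are hypothetical names that mirror a subset of the enumerators and are not the UPstream API.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical mirror of the floating-point UPstream::dataTypes entries
enum class DataType : char
{
    type_float, type_double,
    type_3float, type_3double,
    type_6float, type_6double,
    type_9float, type_9double,
    invalid
};

// Number of scalar components, as listed in the enumerator table
inline int nComponents(DataType id)
{
    switch (id)
    {
        case DataType::type_float:
        case DataType::type_double:  return 1;
        case DataType::type_3float:
        case DataType::type_3double: return 3;
        case DataType::type_6float:
        case DataType::type_6double: return 6;
        case DataType::type_9float:
        case DataType::type_9double: return 9;
        case DataType::invalid:      break;
    }
    return 0;
}

// True for the double-precision entries
inline bool isDouble(DataType id)
{
    return id == DataType::type_double || id == DataType::type_3double
        || id == DataType::type_6double || id == DataType::type_9double;
}

// Size in bytes of one element of the given type
inline std::size_t byteSize(DataType id)
{
    assert(id != DataType::invalid);
    const std::size_t base = isDouble(id) ? sizeof(double) : sizeof(float);
    return base * static_cast<std::size_t>(nComponents(id));
}
```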

|

strong |

Mapping of some MPI op codes.

Min/max location operations are currently excluded until they are needed.

Definition at line 148 of file UPstream.H.

|

strong |

Some bit masks corresponding to topology controls.

These selectively enable topology-aware handling

Definition at line 187 of file UPstream.H.

|

inline explicit noexcept |

Construct for given communication type.

Definition at line 1184 of file UPstream.H.

References commsType(), and Foam::noexcept.

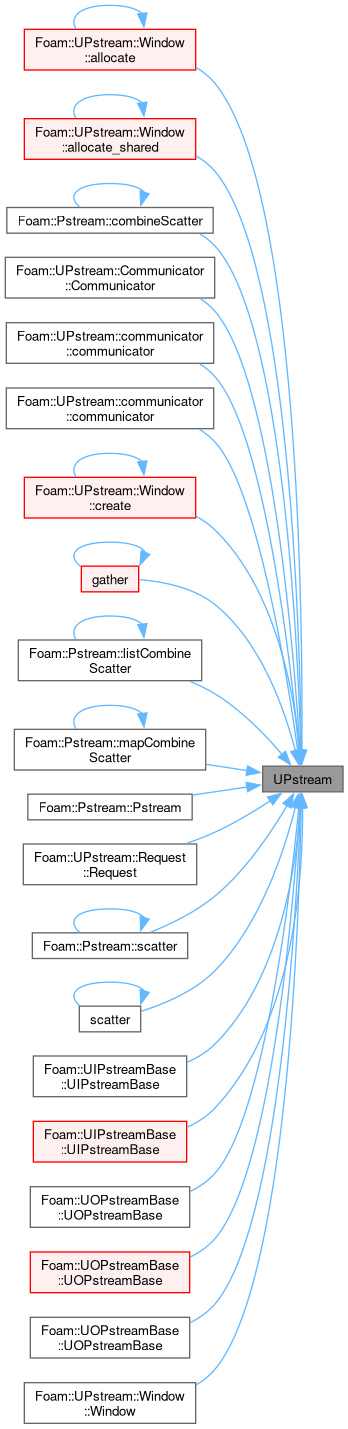

Referenced by UPstream::Window::allocate(), UPstream::Window::allocate_shared(), Pstream::combineScatter(), UPstream::Communicator::Communicator(), UPstream::communicator::communicator(), UPstream::communicator::communicator(), UPstream::Window::create(), gather(), Pstream::listCombineScatter(), Pstream::mapCombineScatter(), Pstream::Pstream(), UPstream::Request::Request(), Pstream::scatter(), scatter(), UIPstreamBase::UIPstreamBase(), UIPstreamBase::UIPstreamBase(), UOPstreamBase::UOPstreamBase(), UOPstreamBase::UOPstreamBase(), UOPstreamBase::UOPstreamBase(), and UPstream::Window::Window().

|

static protected |

Broadcast buffer contents to all ranks (default: from rank=0). The sizes must match on all processes.

For non-parallel : do nothing.

Uses a void pointer and the required data type (as per MPI). This means it should almost never be called directly but always via a compile-time checked caller.

| root | The broadcast root (usually 0 == master) |

Definition at line 25 of file UPstreamBroadcast.C.

|

static protected |

In-place reduction of values with result on rank 0.

Includes internal parallel guard and checks on data types, opcode.

Uses a void pointer and the required data type (as per MPI). This means it should almost never be called directly but always via a compile-time checked caller.

| [out] | req | request information (for non-blocking) |

Definition at line 56 of file UPstreamReduce.C.

|

static protected |

In-place reduction of values with same result on all ranks.

Includes internal parallel guard and checks on data types, opcode.

Uses a void pointer and the required data type (as per MPI). This means it should almost never be called directly but always via a compile-time checked caller.

| [out] | req | request information (for non-blocking) |

Definition at line 68 of file UPstreamReduce.C.
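The difference between the two in-place reductions (result on rank 0 only versus the same result on all ranks) can be illustrated without MPI. This is a minimal single-process sketch, assuming one integer per rank; `OpCode`, `reduceValues` and `simulateReduce` are hypothetical names, and leaving the non-root values untouched is an assumption of the sketch rather than a documented guarantee.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical subset of UPstream::opCodes (illustration only)
enum class OpCode : char { op_min, op_max, op_sum };

// Combine one value per rank into a single result
inline int reduceValues(const std::vector<int>& perRank, OpCode op)
{
    assert(!perRank.empty());
    int result = perRank[0];
    for (std::size_t r = 1; r < perRank.size(); ++r)
    {
        switch (op)
        {
            case OpCode::op_min: result = std::min(result, perRank[r]); break;
            case OpCode::op_max: result = std::max(result, perRank[r]); break;
            case OpCode::op_sum: result += perRank[r]; break;
        }
    }
    return result;
}

// perRank[r] models the in-place buffer of rank r.
// allRanks == false: only rank 0 receives the result.
// allRanks == true : every rank receives the same result.
inline std::vector<int> simulateReduce
(
    std::vector<int> perRank,
    OpCode op,
    bool allRanks
)
{
    const int result = reduceValues(perRank, op);
    if (allRanks)
    {
        std::fill(perRank.begin(), perRank.end(), result);
    }
    else
    {
        perRank[0] = result;
    }
    return perRank;
}
```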

Referenced by find_first(), and find_last().

|

static protected |

In-place scan/exscan reduction of values.

Includes internal parallel guard and checks on data types, opcode.

Uses a void pointer and the required data type (as per MPI). This means it should almost never be called directly but always via a compile-time checked caller.

| exclusive | Use exclusive scan |

Definition at line 80 of file UPstreamReduce.C.
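To make the inclusive/exclusive distinction concrete, here is a single-process sketch of a sum scan over one value per rank. `simulateScan` is a hypothetical helper, not part of UPstream. Note that MPI leaves the rank 0 result of an exclusive scan undefined; the sketch uses 0 there as a stated simplification.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// perRank[r] is the value contributed by rank r.
// Returns the value each rank would hold after a sum scan.
inline std::vector<int> simulateScan
(
    const std::vector<int>& perRank,
    bool exclusive
)
{
    std::vector<int> result(perRank.size(), 0);
    int running = 0;
    for (std::size_t r = 0; r < perRank.size(); ++r)
    {
        if (exclusive)
        {
            // Sum of ranks [0, r): own value is not included.
            // Rank 0 gets 0 here (undefined in real MPI).
            result[r] = running;
            running += perRank[r];
        }
        else
        {
            // Sum of ranks [0, r]: own value is included
            running += perRank[r];
            result[r] = running;
        }
    }
    return result;
}
```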

|

static protected |

Send buffer contents of specified data type to given processor.

Uses a void pointer and the required data type (as per MPI). This means it should almost never be called directly but always via a compile-time checked caller.

| [out] | req | request information (for non-blocking) |

| sendMode | optional send mode (normal or sync) |

Definition at line 35 of file UOPstreamWrite.C.

References commsType(), and NotImplemented.

|

static protected |

Receive buffer contents of specified data type from given processor.

Uses a void pointer and the required data type (as per MPI). This means it should almost never be called directly but always via a compile-time checked caller. The commsType will be ignored if UPstream::Request is specified.

| [out] | req | request information (for non-blocking) |

Definition at line 26 of file UIPstreamRead.C.

References commsType(), and NotImplemented.

|

static protected |

Receive identically-sized (contiguous) data from all ranks, placing the result on rank 0.

Includes internal parallel guard. For non-parallel, does not copy any data. If needed, this must be done by the caller.

| sendData | All ranks: location of individual value to send | |

| recvData | Master: receive buffer with all values. Other ranks: ignored | |

| count | Number of send/recv data per rank. Globally consistent! | |

| [out] | req | request information (for non-blocking) |

Definition at line 25 of file UPstreamGatherScatter.C.
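The buffer layout implied by the parameters above (every rank sends `count` values, rank 0 receives them in rank order) can be sketched in a single process. `simulateGather` is a hypothetical helper for illustration; it does not call MPI or UPstream.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// sendPerRank[r] models the sendData of rank r (exactly 'count' values).
// Returns what recvData on rank 0 would contain: the per-rank blocks
// concatenated in rank order.
inline std::vector<double> simulateGather
(
    const std::vector<std::vector<double>>& sendPerRank,
    int count
)
{
    std::vector<double> recv;
    recv.reserve(sendPerRank.size() * static_cast<std::size_t>(count));
    for (const auto& send : sendPerRank)
    {
        // 'count' must be globally consistent
        assert(send.size() == static_cast<std::size_t>(count));
        recv.insert(recv.end(), send.begin(), send.end());
    }
    return recv;
}
```

In this layout, the block from rank r starts at index `r*count` of the receive buffer.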

|

static protected |

Send identically-sized (contiguous) data from rank 0 to all other ranks.

Includes internal parallel guard.

| sendData | Master: send buffer with all values. Other ranks: ignored | |

| recvData | All ranks: location to receive individual value | |

| count | Number of send/recv data per rank. Globally consistent! | |

| [out] | req | request information (for non-blocking) |

Definition at line 38 of file UPstreamGatherScatter.C.

|

static protected |

Gather/scatter identically-sized data.

Send data from proc slot, receive into all slots

| allData | All ranks: the base of the data locations | |

| count | Number of send/recv data per rank. Globally consistent! | |

| [out] | req | request information (for non-blocking) |

Definition at line 51 of file UPstreamGatherScatter.C.
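"Send data from proc slot, receive into all slots" describes an in-place all-gather: each rank fills only its own slot of `allData`, and afterwards every rank holds every slot. A minimal single-process sketch of that behaviour, with the hypothetical helper `simulateAllGather` standing in for the real collective:

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// buffers[r] models allData on rank r: nRanks*count entries, of which only
// slot r (entries [r*count, (r+1)*count)) is meaningful on entry.
// On return every buffer holds the slots of all ranks.
inline void simulateAllGather
(
    std::vector<std::vector<int>>& buffers,
    int count
)
{
    const std::size_t nRanks = buffers.size();
    const std::size_t n = static_cast<std::size_t>(count);

    // Assemble the global view from each rank's own slot
    std::vector<int> all(nRanks * n);
    for (std::size_t r = 0; r < nRanks; ++r)
    {
        assert(buffers[r].size() == nRanks * n);
        for (std::size_t i = 0; i < n; ++i)
        {
            all[r*n + i] = buffers[r][r*n + i];
        }
    }

    // Every rank receives the complete set of slots
    for (auto& buf : buffers)
    {
        buf = all;
    }
}
```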

|

static protected |

Receive variable length data from all ranks, placing the result on rank 0. (caution: known to scale poorly).

| sendCount | Ignored on master if recvCounts[0] == 0 |

| recvData | Ignored on non-root rank |

| recvCounts | Ignored on non-root rank |

| recvOffsets | Ignored on non-root rank |

Definition at line 65 of file UPstreamGatherScatter.C.
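For the variable-length gather, `recvCounts` and `recvOffsets` together describe where each rank's data lands in the root buffer. A common choice is to derive the offsets as an exclusive prefix sum of the counts. The sketch below assumes that choice and runs in a single process; `offsetsFromCounts` and `simulateGatherv` are hypothetical helpers.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Exclusive prefix sum: offsets[r] = counts[0] + ... + counts[r-1]
inline std::vector<int> offsetsFromCounts(const std::vector<int>& counts)
{
    std::vector<int> offsets(counts.size(), 0);
    for (std::size_t r = 1; r < counts.size(); ++r)
    {
        offsets[r] = offsets[r-1] + counts[r-1];
    }
    return offsets;
}

// sendPerRank[r] models sendData on rank r. Returns the root's recvData.
inline std::vector<int> simulateGatherv
(
    const std::vector<std::vector<int>>& sendPerRank,
    const std::vector<int>& recvCounts,
    const std::vector<int>& recvOffsets
)
{
    assert(sendPerRank.size() == recvCounts.size());
    assert(sendPerRank.size() == recvOffsets.size());

    // The receive buffer must cover the furthest block
    int total = 0;
    for (std::size_t r = 0; r < recvCounts.size(); ++r)
    {
        total = std::max(total, recvOffsets[r] + recvCounts[r]);
    }

    std::vector<int> recv(static_cast<std::size_t>(total), 0);
    for (std::size_t r = 0; r < sendPerRank.size(); ++r)
    {
        assert(sendPerRank[r].size() == static_cast<std::size_t>(recvCounts[r]));
        std::copy
        (
            sendPerRank[r].begin(),
            sendPerRank[r].end(),
            recv.begin() + recvOffsets[r]
        );
    }
    return recv;
}
```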

|

static protected |

Send variable length data from rank 0 to all ranks. (caution: known to scale poorly).

| sendData | Ignored on non-root rank |

| sendCounts | Ignored on non-root rank |

| sendOffsets | Ignored on non-root rank |

Definition at line 79 of file UPstreamGatherScatter.C.

| ClassName | ( | "UPstream" | ) |

Declare name of the class and its debug switch.

|

inline static noexcept |

Test for selection of given topology-aware routine.

Definition at line 1014 of file UPstream.H.

References topologyControl_.

|

inline static constexpr noexcept |

Communicator for all ranks, irrespective of any local worlds.

This value never changes during a simulation.

Definition at line 1081 of file UPstream.H.

References Foam::noexcept.

Referenced by multiWorldConnections::createComms(), fileOperation::getManagedComm(), myWorld(), myWorldID(), and syncObjects::sync().

|

inline static constexpr noexcept |

Communicator within the current rank only.

This value never changes during a simulation.

Definition at line 1088 of file UPstream.H.

References Foam::noexcept.

Referenced by Foam::getCommPattern(), fileOperation::getManagedComm(), UIPstream::read(), and UOPstreamBase::UOPstreamBase().

|

inline static noexcept |

Communicator for all ranks (respecting any local worlds).

This value never changes after startup, unlike commWorld(), which can be temporarily overridden.

Definition at line 1096 of file UPstream.H.

References Foam::noexcept.

|

inline static noexcept |

Communicator for all ranks (respecting any local worlds).

Definition at line 1101 of file UPstream.H.

References Foam::noexcept, and worldComm.

Referenced by mappedPatchBase::calcAMI(), multiWorldConnections::createComms(), mappedPatchBase::distribute(), mappedPatchBase::distribute(), fileOperation::getManagedComm(), mappedPatchBase::getWorldCommunicator(), profilingPstream::report(), mappedPatchBase::reverseDistribute(), and mappedPatchBase::reverseDistribute().

|

inline static noexcept |

Set world communicator. Negative values are a no-op.

Definition at line 1108 of file UPstream.H.

References worldComm.

|

inline static noexcept |

Alter communicator debugging setting. Warns for use of any communicator differing from specified. Negative values disable.

Definition at line 1122 of file UPstream.H.

References warnComm.

Referenced by mappedPatchBase::calcAMI(), mappedPatchBase::collectSamples(), multiWorldConnections::createComms(), cyclicAMIGAMGInterface::cyclicAMIGAMGInterface(), cyclicAMIGAMGInterface::cyclicAMIGAMGInterface(), mappedPatchBase::distribute(), mappedPatchBase::distribute(), mappedPatchBase::findSamples(), cyclicACMIGAMGInterfaceField::initInterfaceMatrixUpdate(), cyclicAMIGAMGInterfaceField::initInterfaceMatrixUpdate(), mappedPatchBase::reverseDistribute(), mappedPatchBase::reverseDistribute(), syncObjects::sync(), and globalMeshData::updateMesh().

|

inline static noexcept |

Number of currently defined communicators.

Definition at line 1132 of file UPstream.H.

References Foam::noexcept.

|

static |

Debugging: print the communication tree.

Definition at line 736 of file UPstream.C.

References Foam::Info, master(), and whichCommunication().

|

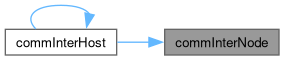

inline static noexcept |

Communicator between nodes/hosts (respects any local worlds).

Definition at line 1145 of file UPstream.H.

References Foam::noexcept.

Referenced by commInterHost().

|

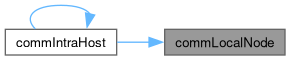

inline static noexcept |

Communicator within the node/host (respects any local worlds).

Definition at line 1153 of file UPstream.H.

References Foam::noexcept.

Referenced by commIntraHost().

|

inline static noexcept |

Both inter-node and local-node communicators have been created.

Definition at line 1161 of file UPstream.H.

References Foam::noexcept.

|

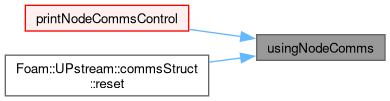

static |

True if node topology-aware routines have been enabled, it is running in parallel, the starting point is the world-communicator and it is not an odd corner case (ie, all processes on one node, all processes on different nodes).

Definition at line 751 of file UPstream.C.

References nodeCommsControl_, and nodeCommsMin_.

Referenced by printNodeCommsControl(), and UPstream::commsStruct::reset().

|

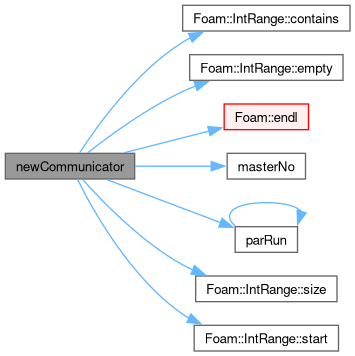

static |

Create new communicator with sub-ranks on the parent communicator.

| parent | The parent communicator |

| subRanks | The contiguous sub-ranks of parent to use |

| withComponents | Call allocateCommunicatorComponents() |

Definition at line 271 of file UPstream.C.

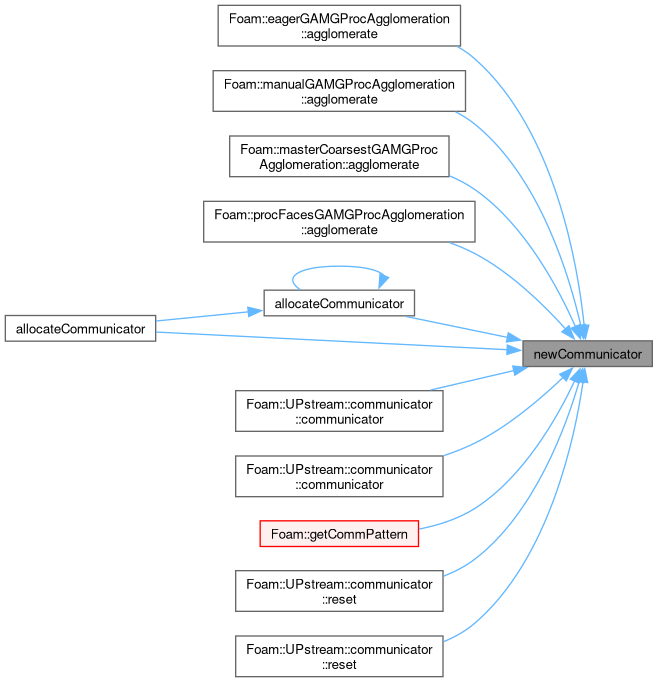

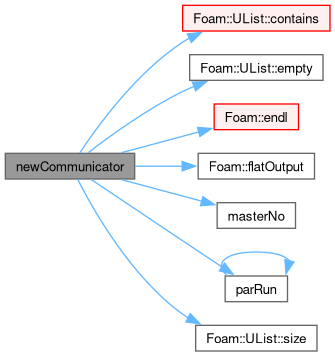

References IntRange< IntType >::contains(), IntRange< IntType >::empty(), Foam::endl(), masterNo(), Foam::nl, parRun(), Foam::Perr, IntRange< IntType >::size(), and IntRange< IntType >::start().

Referenced by eagerGAMGProcAgglomeration::agglomerate(), manualGAMGProcAgglomeration::agglomerate(), masterCoarsestGAMGProcAgglomeration::agglomerate(), procFacesGAMGProcAgglomeration::agglomerate(), allocateCommunicator(), allocateCommunicator(), UPstream::communicator::communicator(), UPstream::communicator::communicator(), Foam::getCommPattern(), UPstream::communicator::reset(), and UPstream::communicator::reset().

|

static |

Create new communicator with sub-ranks on the parent communicator.

| parent | The parent communicator |

| subRanks | The sub-ranks of parent to use (ignore negative values) |

| withComponents | Call allocateCommunicatorComponents() |

Definition at line 321 of file UPstream.C.

References UList< T >::contains(), UList< T >::empty(), Foam::endl(), Foam::flatOutput(), masterNo(), Foam::nl, parRun(), Foam::Perr, and UList< T >::size().

|

static |

Duplicate the parent communicator.

Always calls dupCommunicatorComponents() internally

| parent | The parent communicator |

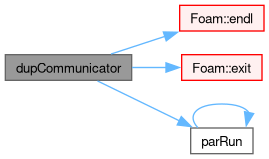

Definition at line 400 of file UPstream.C.

References Foam::endl(), Foam::exit(), Foam::FatalError, FatalErrorInFunction, FOAM_UNLIKELY, parRun(), and Foam::Perr.

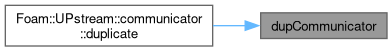

Referenced by UPstream::communicator::duplicate().

|

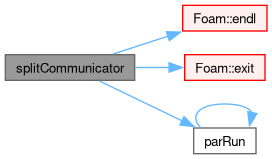

static |

Allocate a new communicator by splitting the parent communicator on the given colour.

Always calls splitCommunicatorComponents() internally

| parent | The parent communicator |

| colour | The colouring to select which ranks to include. Negative values correspond to 'ignore' |

| two_step | Use MPI_Allgather+MPI_Comm_create_group vs MPI_Comm_split |

Definition at line 438 of file UPstream.C.

References Foam::endl(), Foam::exit(), Foam::FatalError, FatalErrorInFunction, FOAM_UNLIKELY, parRun(), and Foam::Perr.

Referenced by UPstream::communicator::split().
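Splitting by colour groups the parent ranks that share a colour value, and ranks with a negative colour are left out. The sketch below shows only that grouping step in a single process; `groupByColour` is a hypothetical helper and does not create any communicator.

```cpp
#include <cassert>
#include <cstddef>
#include <map>
#include <vector>

// colourPerRank[r] is the colour chosen by parent rank r.
// Returns, for each non-negative colour, the parent ranks that would
// form the corresponding sub-communicator (in parent-rank order).
inline std::map<int, std::vector<int>> groupByColour
(
    const std::vector<int>& colourPerRank
)
{
    std::map<int, std::vector<int>> groups;
    for (std::size_t rank = 0; rank < colourPerRank.size(); ++rank)
    {
        const int colour = colourPerRank[rank];
        if (colour < 0)
        {
            continue;  // negative colour corresponds to 'ignore'
        }
        groups[colour].push_back(static_cast<int>(rank));
    }
    return groups;
}
```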

|

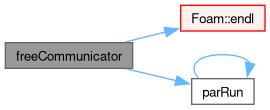

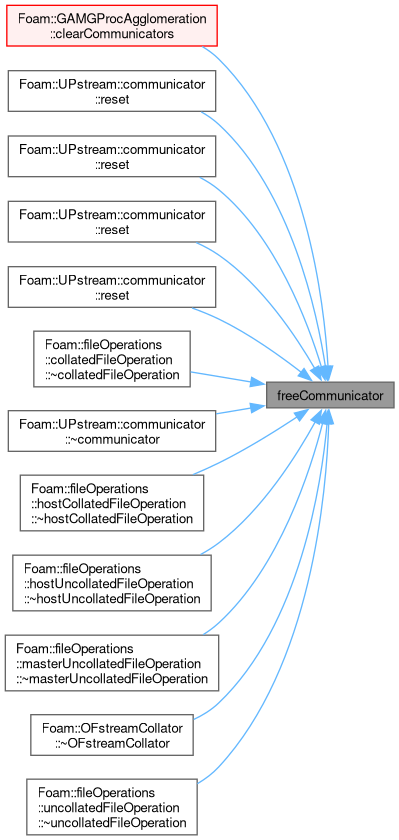

static |

Free a previously allocated communicator.

Ignores placeholder (negative) communicators.

Definition at line 621 of file UPstream.C.

References Foam::endl(), parRun(), and Foam::Perr.

Referenced by GAMGProcAgglomeration::clearCommunicators(), UPstream::communicator::reset(), UPstream::communicator::reset(), UPstream::communicator::reset(), UPstream::communicator::reset(), collatedFileOperation::~collatedFileOperation(), UPstream::communicator::~communicator(), hostCollatedFileOperation::~hostCollatedFileOperation(), hostUncollatedFileOperation::~hostUncollatedFileOperation(), masterUncollatedFileOperation::~masterUncollatedFileOperation(), OFstreamCollator::~OFstreamCollator(), and uncollatedFileOperation::~uncollatedFileOperation().

|

static |

Return physical processor number (i.e. processor number in worldComm) given communicator and processor.

Definition at line 657 of file UPstream.C.

References parent(), and procID().

Referenced by procNo().

|

static |

Return processor number in communicator (given physical processor number) (= reverse of baseProcNo).

Definition at line 670 of file UPstream.C.

References parent(), procID(), and procNo().

Referenced by procNo(), and procNo().

|

static |

Return processor number in communicator (given processor number and communicator).

Definition at line 686 of file UPstream.C.

References baseProcNo(), and procNo().
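The three rank-translation functions above rely on each communicator knowing its parent and the parent ranks of its members. The following standalone sketch illustrates the idea under that assumption; `CommInfo`, `baseRank` and `localRank` are hypothetical names and the data layout is not taken from the UPstream sources.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical per-communicator record (illustration only)
struct CommInfo
{
    int parent;                // index of the parent communicator, -1 if none
    std::vector<int> procIDs;  // rank in the parent for each local rank
};

// Local rank in 'comm' -> rank in the top-level communicator
inline int baseRank(const std::vector<CommInfo>& comms, int comm, int rank)
{
    while (comms[comm].parent >= 0 && rank >= 0)
    {
        assert(static_cast<std::size_t>(rank) < comms[comm].procIDs.size());
        rank = comms[comm].procIDs[rank];  // translate into the parent
        comm = comms[comm].parent;         // and continue one level up
    }
    return rank;
}

// Top-level rank -> local rank in 'comm', or -1 if it is not a member
inline int localRank(const std::vector<CommInfo>& comms, int comm, int base)
{
    if (comms[comm].parent < 0)
    {
        return base;
    }
    const int inParent = localRank(comms, comms[comm].parent, base);
    if (inParent < 0)
    {
        return -1;
    }
    const std::vector<int>& ids = comms[comm].procIDs;
    for (std::size_t i = 0; i < ids.size(); ++i)
    {
        if (ids[i] == inParent)
        {
            return static_cast<int>(i);
        }
    }
    return -1;
}
```

Translating a rank between two arbitrary communicators then amounts to `localRank(comms, target, baseRank(comms, current, rank))`.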

Add the valid option this type of communications library adds/requires on the command line.

Definition at line 26 of file UPstream.C.

|

static |

Initialisation function called from main.

Spawns sub-processes and initialises inter-communication

Definition at line 40 of file UPstream.C.

References Foam::endl(), Foam::exit(), Foam::FatalError, and FatalErrorInFunction.

Referenced by UPstream::commsStructList::init(), and ParRunControl::runPar().

|

static |

Special purpose initialisation function.

Performs a basic MPI_Init without any other setup. Only used for applications that need MPI communication when OpenFOAM is running in a non-parallel mode.

Definition at line 30 of file UPstream.C.

References Foam::endl(), and WarningInFunction.

Referenced by zoltanRenumber::renumber().

|

static |

Impose a synchronisation barrier (optionally non-blocking).

Definition at line 106 of file UPstream.C.

|

static |

Impose a point-to-point synchronisation barrier by sending a zero-byte "done" message to given rank.

A no-op for non-parallel

| toProcNo | The destination rank |

| communicator | The communicator index (eg, UPstream::worldComm) |

| tag | Message tag (must match on receiving side) |

Definition at line 110 of file UPstream.C.

|

static |

Impose a point-to-point synchronisation barrier by receiving a zero-byte "done" message from given rank.

A no-op for non-parallel

| fromProcNo | The source rank (negative == ANY_SOURCE) |

| communicator | The communicator index (eg, UPstream::worldComm) |

| tag | Message tag (must match on sending side) |

Definition at line 119 of file UPstream.C.

|

static |

Probe for an incoming message.

| commsType | Non-blocking or not |

| fromProcNo | The source rank (negative == ANY_SOURCE) |

| tag | The source message tag |

| communicator | The communicator index |

Definition at line 131 of file UPstream.C.

References commsType().

Referenced by decomposedBlockData::readBlocks(), and decomposedBlockData::readBlocks().

|

static |

Report the node-communication settings.

Definition at line 56 of file UPstream.C.

References nodeCommsControl_, nodeCommsMin_, nProcs(), numNodes(), os(), usingNodeComms(), and worldComm.

Referenced by argList::parse().

|

static |

Report the topology routines settings.

Definition at line 100 of file UPstream.C.

References broadcast(), gather(), os(), PrintControl, Foam::reduce(), and topologyControl_.

Referenced by argList::parse().

|

static noexcept |

Number of outstanding requests (on the internal list of requests).

Definition at line 54 of file UPstreamRequest.C.

References Foam::noexcept.

Referenced by volPointInterpolationAdjoint::addSeparated(), GAMGAgglomeration::agglomerateLduAddressing(), LduMatrix< Type, DType, LUType >::Amul(), lduMatrix::Amul(), motionSmootherAlgo::correctBoundaryConditions(), metisLikeDecomp::decomposeGeneral(), mapDistributeBase::distribute(), mapDistributeBase::distribute(), mapDistributeBase::distribute(), GeometricBoundaryField< Type, PatchField, GeoMesh >::evaluate(), GeometricBoundaryField< Type, PatchField, GeoMesh >::evaluate_if(), GeometricBoundaryField< Type, PatchField, GeoMesh >::evaluateLocal(), GeometricBoundaryField< Type, PatchField, GeoMesh >::evaluateSelected(), mapDistributeBase::exchangeMasks(), GAMGProcAgglomeration::globalCellCells(), lduPrimitiveMesh::globalCellCells(), calculatedProcessorFvPatchField< Type >::initEvaluate(), processorFaPatchField< Type >::initEvaluate(), processorFvPatchField< Type >::initEvaluate(), calculatedProcessorFvPatchField< Type >::initInterfaceMatrixUpdate(), calculatedProcessorGAMGInterfaceField::initInterfaceMatrixUpdate(), lduCalculatedProcessorField< Type >::initInterfaceMatrixUpdate(), processorFaPatchField< Type >::initInterfaceMatrixUpdate(), processorFaPatchField< Type >::initInterfaceMatrixUpdate(), processorFvPatchField< Type >::initInterfaceMatrixUpdate(), processorFvPatchField< Type >::initInterfaceMatrixUpdate(), processorGAMGInterfaceField::initInterfaceMatrixUpdate(), faceAreaPairGAMGAgglomeration::movePoints(), decomposedBlockData::readBlocks(), decomposedBlockData::readBlocks(), LduMatrix< Type, DType, LUType >::residual(), lduMatrix::residual(), mapDistributeBase::send(), motionSmootherAlgo::setDisplacementPatchFields(), GaussSeidelSmoother::smooth(), nonBlockingGaussSeidelSmoother::smooth(), symGaussSeidelSmoother::smooth(), TGaussSeidelSmoother< Type, DType, LUType >::smooth(), faMatrix< Type >::solve(), LUscalarMatrix::solve(), fvMatrix< Type >::solveSegregated(), syncTools::syncBoundaryFaceList(), syncTools::syncFaceList(), LduMatrix< Type, DType, 
LUType >::Tmul(), lduMatrix::Tmul(), distributedDILUPreconditioner::updateMatrixInterfaces(), OFstreamCollator::write(), and decomposedBlockData::writeBlocks().

|

static |

Truncate outstanding requests to given length, which is expected to be in the range [0 to nRequests()].

A no-op for out-of-range values.

Definition at line 56 of file UPstreamRequest.C.

References n.

|

static |

Transfer the (wrapped) MPI request to the internal global list and invalidate the parameter (ignores null requests).

A no-op for non-parallel

Definition at line 58 of file UPstreamRequest.C.

|

static |

Non-blocking comms: cancel and free outstanding request. Corresponds to MPI_Cancel() + MPI_Request_free().

A no-op if parRun() == false, if there are no pending requests, or if the index is out-of-range (0 to nRequests).

Definition at line 60 of file UPstreamRequest.C.

Referenced by UPstream::Request::cancel().

|

static |

Non-blocking comms: cancel and free outstanding request. Corresponds to MPI_Cancel() + MPI_Request_free().

A no-op for non-parallel

Definition at line 61 of file UPstreamRequest.C.

|

static |

Non-blocking comms: cancel and free outstanding requests. Corresponds to MPI_Cancel() + MPI_Request_free().

A no-op if parRun() == false or list is empty

Definition at line 62 of file UPstreamRequest.C.

Referenced by distributedDILUPreconditioner::wait().

|

static |

Non-blocking comms: cancel/free outstanding requests (from position onwards) and remove from internal list of requests. Corresponds to MPI_Cancel() + MPI_Request_free().

A no-op if parRun() == false, if the position is out-of-range [0 to nRequests()], or the internal list of requests is empty.

| pos | starting position within the internal list of requests |

| len | length of slice to remove (negative = until the end) |

Definition at line 64 of file UPstreamRequest.C.

References Foam::pos().

|

static |

Non-blocking comms: free outstanding request. Corresponds to MPI_Request_free().

A no-op if parRun() == false

Definition at line 66 of file UPstreamRequest.C.

Referenced by UPstream::Request::free().

|

static |

Non-blocking comms: free outstanding requests. Corresponds to MPI_Request_free().

A no-op if parRun() == false or list is empty

Definition at line 67 of file UPstreamRequest.C.

|

static |

Wait until all requests (from position onwards) have finished. Corresponds to MPI_Waitall().

A no-op if parRun() == false, if the position is out-of-range [0 to nRequests()], or the internal list of requests is empty.

If checking a trailing portion of the list, it will also trim the list of outstanding requests as a side-effect. This is a feature (not a bug) to conveniently manage the list.

| pos | starting position within the internal list of requests |

| len | length of slice to check (negative = until the end) |

Definition at line 69 of file UPstreamRequest.C.

References Foam::pos().

Referenced by LduMatrix< Type, DType, LUType >::updateMatrixInterfaces(), and waitRequests().
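The `pos`/`len` convention and the trimming side-effect can be sketched with plain integers. This is an illustration under assumptions: `Slice`, `clampSlice` and `sizeAfterWait` are hypothetical helpers, and clamping an over-long `len` to the end of the list is an assumption of the sketch, not something stated above.

```cpp
#include <cassert>

// Hypothetical description of the addressed part of the request list
struct Slice
{
    int start;
    int size;
};

// Resolve (pos, len) against a list of nRequests entries.
// A negative len means "until the end"; an out-of-range pos gives an
// empty slice (the documented no-op case).
inline Slice clampSlice(int nRequests, int pos, int len)
{
    if (nRequests <= 0 || pos < 0 || pos >= nRequests)
    {
        return Slice{0, 0};
    }
    const int avail = nRequests - pos;
    if (len < 0 || len > avail)
    {
        len = avail;  // assumption: over-long slices are clamped
    }
    return Slice{pos, len};
}

// List length after waiting on the slice: a trailing slice is trimmed
// from the list, any other slice leaves the length unchanged.
inline int sizeAfterWait(int nRequests, Slice s)
{
    if (s.size > 0 && s.start + s.size == nRequests)
    {
        return s.start;
    }
    return nRequests;
}
```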

|

static |

Wait until all requests have finished. Corresponds to MPI_Waitall().

A no-op if parRun() == false, or the list is empty.

Definition at line 70 of file UPstreamRequest.C.

|

static |

Wait until any request (from position onwards) has finished. Corresponds to MPI_Waitany().

A no-op and returns false if parRun() == false, if the position is out-of-range [0 to nRequests()], or the internal list of requests is empty.

| pos | starting position within the internal list of requests |

| len | length of slice to check (negative = until the end) |

Definition at line 72 of file UPstreamRequest.C.

References Foam::pos().

|

static |

Wait until some requests (from position onwards) have finished. Corresponds to MPI_Waitsome().

A no-op and returns false if parRun() == false, if the position is out-of-range [0 to nRequests], or the internal list of requests is empty.

| pos | starting position within the internal list of requests | |

| len | length of slice to check (negative = until the end) | |

| [out] | indices | the completed request indices relative to the starting position. This is an optional parameter that can be used to recover the indices or simply to avoid reallocations when calling within a loop. |

Definition at line 77 of file UPstreamRequest.C.

References DynamicList< T, SizeMin >::clear(), and Foam::pos().

Referenced by mapDistributeBase::distribute(), mapDistributeBase::distribute(), mapDistributeBase::distribute(), LduMatrix< Type, DType, LUType >::updateMatrixInterfaces(), and lduMatrix::updateMatrixInterfaces().

|

static |

Wait until some requests have finished. Corresponds to MPI_Waitsome().

A no-op and returns false if parRun() == false, the list is empty, or if all the requests have already been handled.

| requests | the requests | |

| [out] | indices | the completed request indices relative to the starting position. This is an optional parameter that can be used to recover the indices or simply to avoid reallocations when calling within a loop. |

Definition at line 88 of file UPstreamRequest.C.

References DynamicList< T, SizeMin >::clear().

|

static |

Wait until any request has finished and return its index. Corresponds to MPI_Waitany().

Returns -1 if parRun() == false, or the list is empty, or if all the requests have already been handled

Definition at line 98 of file UPstreamRequest.C.

|

static |

Wait until request i has finished. Corresponds to MPI_Wait().

A no-op if parRun() == false, if there are no pending requests, or if the index is out-of-range (0 to nRequests)

```cpp
void Foam::UPstream::waitRequests
(
    UPstream::Request& req0,
    UPstream::Request& req1
)
{
    // No-op for non-parallel
    if (!UPstream::parRun())
    {
        return;
    }

    int count = 0;
    MPI_Request mpiRequests[2];

    mpiRequests[count] = PstreamUtils::Cast::to_mpi(req0);
    if (MPI_REQUEST_NULL != mpiRequests[count])
    {
        ++count;
    }

    mpiRequests[count] = PstreamUtils::Cast::to_mpi(req1);
    if (MPI_REQUEST_NULL != mpiRequests[count])
    {
        ++count;
    }

    // Flag in advance as being handled
    req0 = UPstream::Request(MPI_REQUEST_NULL);
    req1 = UPstream::Request(MPI_REQUEST_NULL);

    if (!count)
    {
        return;
    }

    profilingPstream::beginTiming();

    // On success: sets each request to MPI_REQUEST_NULL
    if (count == 1)
    {
        // On success: sets request to MPI_REQUEST_NULL
        if (MPI_Wait(mpiRequests, MPI_STATUS_IGNORE))
        {
            FatalErrorInFunction
                << "MPI_Wait returned with error"
                << Foam::abort(FatalError);
        }
    }
    else  // (count > 1)
    {
        // On success: sets each request to MPI_REQUEST_NULL
        if (MPI_Waitall(count, mpiRequests, MPI_STATUSES_IGNORE))
        {
            FatalErrorInFunction
                << "MPI_Waitall returned with error"
                << Foam::abort(FatalError);
        }
    }

    profilingPstream::addWaitTime();
}
```

Definition at line 103 of file UPstreamRequest.C.

Referenced by calculatedProcessorFvPatchField< Type >::evaluate(), processorFaPatchField< Type >::evaluate(), processorFvPatchField< Type >::evaluate(), calculatedProcessorFvPatchField< Type >::updateInterfaceMatrix(), calculatedProcessorGAMGInterfaceField::updateInterfaceMatrix(), lduCalculatedProcessorField< Type >::updateInterfaceMatrix(), processorFaPatchField< Type >::updateInterfaceMatrix(), processorFaPatchField< Type >::updateInterfaceMatrix(), processorFvPatchField< Type >::updateInterfaceMatrix(), processorFvPatchField< Type >::updateInterfaceMatrix(), processorGAMGInterfaceField::updateInterfaceMatrix(), UPstream::Request::wait(), and decomposedBlockData::writeBlocks().

|

static |

Wait until specified request has finished. Corresponds to MPI_Wait().

A no-op if parRun() == false or for a null-request

Definition at line 104 of file UPstreamRequest.C.

|

static |

Is request i active (!= MPI_REQUEST_NULL)?

False if there are no pending requests, or if the index is out-of-range (0 to nRequests)

Definition at line 106 of file UPstreamRequest.C.

|

static |

Is request active (!= MPI_REQUEST_NULL)?

Definition at line 107 of file UPstreamRequest.C.

|

static |

Non-blocking comms: has request i finished? Corresponds to MPI_Test().

A no-op and returns true if parRun() == false, if there are no pending requests, or if the index is out-of-range (0 to nRequests)

Definition at line 109 of file UPstreamRequest.C.

Referenced by calculatedProcessorFvPatchField< Type >::evaluate(), processorFaPatchField< Type >::evaluate(), processorFvPatchField< Type >::evaluate(), UPstream::Request::finished(), calculatedProcessorFvPatchField< Type >::ready(), lduCalculatedProcessorField< Type >::ready(), processorFaPatchField< Type >::ready(), processorFvPatchField< Type >::ready(), processorGAMGInterfaceField::ready(), calculatedProcessorFvPatchField< Type >::updateInterfaceMatrix(), calculatedProcessorGAMGInterfaceField::updateInterfaceMatrix(), lduCalculatedProcessorField< Type >::updateInterfaceMatrix(), processorFaPatchField< Type >::updateInterfaceMatrix(), processorFaPatchField< Type >::updateInterfaceMatrix(), processorFvPatchField< Type >::updateInterfaceMatrix(), processorFvPatchField< Type >::updateInterfaceMatrix(), and processorGAMGInterfaceField::updateInterfaceMatrix().

|

static |

Non-blocking comms: has request finished? Corresponds to MPI_Test().

A no-op and returns true if parRun() == false or for a null-request

Definition at line 110 of file UPstreamRequest.C.

|

static |

Non-blocking comms: have all requests (from position onwards) finished? Corresponds to MPI_Testall().

A no-op and returns true if parRun() == false, if there are no pending requests, if the index is out-of-range (0 to nRequests), or if the addressed range is empty.

| pos | starting position within the internal list of requests |

| len | length of slice to check (negative = until the end) |

Definition at line 112 of file UPstreamRequest.C.

References Foam::pos().

Referenced by cyclicACMIFvPatchField< Type >::ready(), cyclicACMIGAMGInterfaceField::ready(), cyclicAMIFvPatchField< Type >::ready(), and cyclicAMIGAMGInterfaceField::ready().

|

static |

Non-blocking comms: have all requests finished? Corresponds to MPI_Testall().

A no-op and returns true if parRun() == false or list is empty

Definition at line 118 of file UPstreamRequest.C.

|

static |

Non-blocking comms: have both requests finished? Corresponds to pair of MPI_Test().

A no-op and returns true if parRun() == false, if there are no pending requests, or if the indices are out-of-range (0 to nRequests). Each finished request parameter is set to -1 (ie, done).

Definition at line 124 of file UPstreamRequest.C.

Referenced by calculatedProcessorFvPatchField< Type >::all_ready(), and lduCalculatedProcessorField< Type >::all_ready().

|

static |

Non-blocking comms: wait for both requests to finish. Corresponds to pair of MPI_Wait().

A no-op if parRun() == false, if there are no pending requests, or if the indices are out-of-range (0 to nRequests). Each finished request parameter is set to -1 (ie, done).

Definition at line 132 of file UPstreamRequest.C.

|

inline static noexcept |

Set as parallel run on/off.

Definition at line 1669 of file UPstream.H.

References Foam::noexcept, and parRun().

Referenced by snappyLayerDriver::addLayers(), snappyLayerDriver::addLayersSinglePass(), masterUncollatedFileOperation::addWatch(), regIOobject::addWatch(), unwatchedIOdictionary::addWatch(), decompositionGAMGAgglomeration::agglomerate(), meshRefinement::balance(), AMIInterpolation::calcDistribution(), addPatchCellLayer::calcExtrudeInfo(), processorCyclicPolyPatch::calcGeometry(), processorFaPatch::calcGeometry(), processorPolyPatch::calcGeometry(), GlobalOffset< label >::calculate(), GlobalOffset< label >::calculate(), surfaceNoise::calculate(), call_window_allocate(), call_window_create(), FaceCellWave< Type, TrackingData >::cellToFace(), OppositeFaceCellWave< Type, TrackingData >::cellToFace(), designVariablesUpdate::checkConvergence(), faBoundaryMesh::checkParallelSync(), polyBoundaryMesh::checkParallelSync(), ZoneMesh< ZoneType, MeshType >::checkParallelSync(), AMIInterpolation::checkSymmetricWeights(), regionSplit::ClassName(), fieldValue::combineFields(), limitTurbulenceViscosity::correct(), meshRefinement::countEdgeFaces(), cyclicACMIFvsPatchField< Type >::coupled(), decompositionMethod::decompose(), simpleGeomDecomp::decompose(), metisLikeDecomp::decomposeGeneral(), conformalVoronoiMesh::decomposition(), processorFaPatch::delta(), processorFvPatch::delta(), masterUncollatedFileOperation::dirPath(), distributedTriSurfaceMesh::distribute(), fvMeshDistribute::distribute(), mapDistributeBase::distribute(), mapDistributeBase::distribute(), mapDistributeBase::distribute(), refinementHistory::distribute(), distributedTriSurfaceMesh::distributedTriSurfaceMesh(), distributedTriSurfaceMesh::distributedTriSurfaceMesh(), faMeshBoundaryHalo::distributeSparse(), snappyLayerDriver::doLayers(), snappyRefineDriver::doRefine(), dupCommunicator(), PointEdgeWave< Type, TrackingData >::edgeToPoint(), ensightSurfaceReader::ensightSurfaceReader(), calculatedProcessorFvPatchField< Type >::evaluate(), processorFaPatchField< Type >::evaluate(), processorFvPatchField< Type 
>::evaluate(), mapDistributeBase::exchangeMasks(), Foam::exitNow(), masterUncollatedFileOperation::filePath(), polyMesh::findCell(), distributedTriSurfaceMesh::findLine(), distributedTriSurfaceMesh::findLineAll(), distributedTriSurfaceMesh::findLineAny(), distributedTriSurfaceMesh::findNearest(), distributedTriSurfaceMesh::findNearest(), masterUncollatedFileOperation::findWatch(), freeCommunicator(), PatchTools::gatherAndMerge(), PatchTools::gatherAndMerge(), GenericPatchWriter< indirectPrimitivePatch >::GenericPatchWriter(), GenericPatchWriter< indirectPrimitivePatch >::GenericPatchWriter(), ensightSurfaceReader::geometry(), Foam::getCommPattern(), zoneDistribute::getDatafromOtherProc(), distributedTriSurfaceMesh::getField(), masterUncollatedFileOperation::getFile(), distributedTriSurfaceMesh::getNormal(), distributedTriSurfaceMesh::getRegion(), exprResult::getResult(), masterUncollatedFileOperation::getState(), distributedTriSurfaceMesh::getVolumeType(), GAMGProcAgglomeration::globalCellCells(), faMeshBoundaryHalo::haloSize(), InflationInjection< CloudType >::InflationInjection(), faMesh::init(), calculatedProcessorFvPatchField< Type >::initEvaluate(), processorFaPatchField< Type >::initEvaluate(), processorFvPatchField< Type >::initEvaluate(), processorFaPatch::initGeometry(), processorPolyPatch::initGeometry(), InjectedParticleDistributionInjection< CloudType >::initialise(), InjectedParticleInjection< CloudType >::initialise(), extractEulerianParticles::initialiseBins(), processorPolyPatch::initOrder(), processorCyclicPointPatchField< Type >::initSwapAddSeparated(), processorFaPatch::initUpdateMesh(), processorPolyPatch::initUpdateMesh(), internalMeshWriter::internalMeshWriter(), internalMeshWriter::internalMeshWriter(), internalWriter::internalWriter(), internalWriter::internalWriter(), fileOperation::isIOrank(), isoAdvection::isoAdvection(), FaceCellWave< Type, TrackingData >::iterate(), PointEdgeWave< Type, TrackingData >::iterate(), 
lagrangianWriter::lagrangianWriter(), lagrangianWriter::lagrangianWriter(), lineWriter::lineWriter(), lineWriter::lineWriter(), masterUncollatedFileOperation::localObjectPath(), fileOperation::lookupAndCacheProcessorsPath(), LUscalarMatrix::LUscalarMatrix(), patchMeanVelocityForce::magUbarAve(), processorFaPatch::makeCorrectionVectors(), processorFaPatch::makeDeltaCoeffs(), processorFaPatch::makeLPN(), processorFaPatch::makeNonGlobalPatchPoints(), processorFaPatch::makeWeights(), processorFvPatch::makeWeights(), error::master(), mergedSurf::merge(), surfaceWriter::merge(), surfaceWriter::mergeFieldTemplate(), polyBoundaryMesh::neighbourEdges(), processorTopology::New(), newCommunicator(), newCommunicator(), masterUncollatedFileOperation::NewIFstream(), processorPolyPatch::order(), fieldMinMax::output(), InflationInjection< CloudType >::parcelsToInject(), parRun(), patchMeshWriter::patchMeshWriter(), patchMeshWriter::patchMeshWriter(), patchWriter::patchWriter(), patchWriter::patchWriter(), polyWriter::polyWriter(), polyWriter::polyWriter(), RecycleInteraction< CloudType >::postEvolve(), powerLawLopesdaCostaZone::powerLawLopesdaCostaZone(), meshRefinement::printMeshInfo(), processorTopology::procAdjacency(), singleDirectionUniformBin::processField(), uniformBin::processField(), collatedFileOperation::processorsDir(), triangulatedPatch::randomGlobalPoint(), masterUncollatedFileOperation::read(), uncollatedFileOperation::read(), surfaceNoise::read(), lumpedPointState::readData(), Time::readModifiedObjects(), masterUncollatedFileOperation::readStream(), surfaceNoise::readSurfaceData(), masterUncollatedFileOperation::removeWatch(), parProfiling::report(), AMIWeights::reportPatch(), faMeshBoundaryHalo::reset(), fvMeshSubset::reset(), GlobalOffset< label >::reset(), cyclicAMIPolyPatch::resetAMI(), mapDistributeBase::schedule(), Time::setControls(), foamReport::setStaticBuiltins(), masterUncollatedFileOperation::setUnmodified(), zoneDistribute::setUpCommforZone(), 
globalMeshData::sharedPoints(), shortestPathSet::shortestPathSet(), error::simpleExit(), LUscalarMatrix::solve(), TDACChemistryModel< psiReactionThermo, constGasHThermoPhysics >::solve(), splitCommunicator(), messageStream::stream(), surfaceWriter::surface(), surfaceNoise::surfaceAverage(), surfaceFieldWriter::surfaceFieldWriter(), surfaceFieldWriter::surfaceFieldWriter(), surfaceWriter::surfaceWriter(), surfaceWriter::surfaceWriter(), surfaceWriter::surfaceWriter(), processorCyclicPointPatchField< Type >::swapAddSeparated(), syncTools::swapBoundaryCellList(), syncTools::swapBoundaryCellPositions(), syncTools::swapBoundaryFaceList(), syncTools::swapBoundaryFacePositions(), syncTools::swapFaceList(), masterUncollatedFileOperation::sync(), syncObjects::sync(), syncTools::syncBoundaryFaceList(), syncTools::syncEdgeMap(), syncTools::syncFaceList(), syncTools::syncFaceList(), syncTools::syncFacePositions(), faMesh::syncGeom(), syncTools::syncPointMap(), triSurfaceMesh::triSurfaceMesh(), triSurfaceMesh::triSurfaceMesh(), abaqusWriter::TypeNameNoDebug(), boundaryDataWriter::TypeNameNoDebug(), debugWriter::TypeNameNoDebug(), ensightWriter::TypeNameNoDebug(), foamWriter::TypeNameNoDebug(), nastranWriter::TypeNameNoDebug(), nullWriter::TypeNameNoDebug(), proxyWriter::TypeNameNoDebug(), rawWriter::TypeNameNoDebug(), starcdWriter::TypeNameNoDebug(), vtkWriter::TypeNameNoDebug(), x3dWriter::TypeNameNoDebug(), fileOperation::uniformFile(), mapDistributeBase::unionCombineMasks(), turbulentDFSEMInletFvPatchVectorField::updateCoeffs(), calculatedProcessorFvPatchField< Type >::updateInterfaceMatrix(), faMesh::updateMesh(), processorFaPatch::updateMesh(), processorPolyPatch::updateMesh(), fileOperation::updateStates(), masterUncollatedFileOperation::updateStates(), dynamicCode::waitForFile(), energySpectrum::write(), viewFactorHeatFlux::write(), ParticleZoneInfo< CloudType >::write(), Foam::vtk::writeCellSetFaces(), meshToMeshMethod::writeConnectivity(), 
Foam::ensightOutput::writeFaceConnectivity(), Foam::ensightOutput::writeFaceConnectivity(), Foam::vtk::writeFaceSet(), AMIWeights::writeFileHeader(), fieldMinMax::writeFileHeader(), isoAdvection::writeIsoFaces(), collatedFileOperation::writeObject(), Foam::vtk::writePointSet(), surfaceNoise::writeSurfaceData(), topOVariablesBase::writeSurfaceFiles(), streamLineBase::writeToFile(), and Foam::vtk::writeTopoSet().

|

inline static noexcept |

Test if this is a parallel run.

Modify access is deprecated.

Definition at line 1681 of file UPstream.H.

References Foam::noexcept.

Referenced by regIOobject::addWatch(), masterUncollatedFileOperation::addWatches(), waveMethod::calculate(), cyclicAMIPolyPatch::canResetAMI(), calculatedProcessorFvPatchField< Type >::coupled(), cyclicACMIFvPatch::coupled(), cyclicAMIFvPatch::coupled(), cyclicAMIPointPatch::coupled(), processorCyclicFvsPatchField< Type >::coupled(), processorCyclicPointPatchField< Type >::coupled(), processorFaePatchField< Type >::coupled(), processorFaPatch::coupled(), processorFaPatchField< Type >::coupled(), processorFvPatch::coupled(), processorFvPatchField< Type >::coupled(), processorFvsPatchField< Type >::coupled(), processorPointPatchField< Type >::coupled(), processorPolyPatch::coupled(), masterUncollatedFileOperation::dirPath(), fvMeshDistribute::distribute(), masterUncollatedFileOperation::filePath(), masterUncollatedFileOperation::findInstance(), masterUncollatedFileOperation::findTimes(), decompositionMethod::nDomains(), fvMeshTools::newMesh(), argList::parse(), masterUncollatedFileOperation::read(), uncollatedFileOperation::read(), regIOobject::readHeaderOk(), masterUncollatedFileOperation::readObjects(), profilingPstream::report(), surfaceWriter::setSurface(), surfaceWriter::setSurface(), ensightFaces::uniqueMeshPoints(), ensightCells::write(), and ensightFaces::write().

|

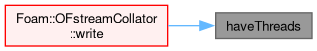

inline static noexcept |

True if thread support is available.

Definition at line 1686 of file UPstream.H.

References Foam::noexcept.

Referenced by OFstreamCollator::write().

|

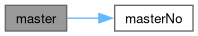

inline static constexpr noexcept |

Relative rank of the master process; always 0.

Definition at line 1691 of file UPstream.H.

References Foam::noexcept.

Referenced by bitSet::broadcast(), broadcast(), broadcast(), AMIInterpolation::calcDistribution(), Foam::createReconstructMap(), metisLikeDecomp::decomposeGeneral(), lduPrimitiveMesh::gather(), decomposedBlockData::gatherProcData(), is_rank(), is_subrank(), LUscalarMatrix::LUscalarMatrix(), master(), newCommunicator(), newCommunicator(), masterUncollatedFileOperation::NewIFstream(), argList::parse(), masterUncollatedFileOperation::read(), UIPstream::read(), decomposedBlockData::readBlocks(), decomposedBlockData::readBlocks(), masterUncollatedFileOperation::readHeader(), mapDistributeBase::schedule(), globalMeshData::sharedPoints(), LUscalarMatrix::solve(), syncTools::syncEdgeMap(), syncTools::syncPointMap(), UIPBstream::UIPBstream(), UOPBstream::UOPBstream(), UOPstreamBase::UOPstreamBase(), energySpectrum::write(), OFstreamCollator::write(), decomposedBlockData::writeBlocks(), Foam::ensightOutput::writeFaceConnectivity(), and Foam::ensightOutput::writeFaceConnectivity().
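
The parallel-run test and the fixed master rank are often combined to restrict shared output to a single process. The following is a minimal standalone sketch of that pattern, not OpenFOAM code: `MockComm`, `isParallel`, `masterRank`, and `shouldReport` are hypothetical stand-ins that only mirror the documented semantics (a boolean parallel-run test and a master rank that is always 0).

```cpp
#include <cassert>

// Hypothetical stand-in for the queries documented above; NOT the UPstream class.
struct MockComm
{
    bool parallel;  // what a "parallel run" test would report
    int rank;       // this process's rank within the communicator

    // Mirrors the documented guarantee: the master's relative rank is always 0
    static constexpr int masterRank() noexcept { return 0; }

    bool isParallel() const noexcept { return parallel; }
};

// True when this process should emit shared output:
// always in a serial run, only on the master rank in a parallel run.
inline bool shouldReport(const MockComm& comm) noexcept
{
    assert(comm.rank >= 0);  // ranks are non-negative
    return !comm.isParallel() || comm.rank == MockComm::masterRank();
}
```

With this helper, `shouldReport(MockComm{true, 3})` is false while `shouldReport(MockComm{true, 0})` and any serial case are true, so only one process writes.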

|

inline static |

Number of ranks in a parallel run (for the given communicator). It is 1 for a serial run.

Definition at line 1697 of file UPstream.H.

References worldComm.